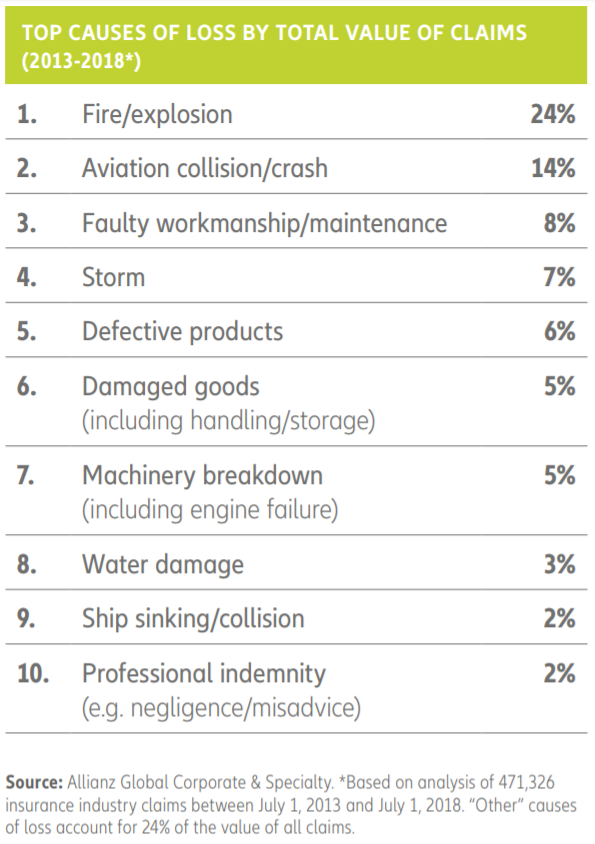

When the unforeseen strikes, insurers everywhere hold their breath as they wait for the dreaded number, the damage loss estimate, to come in. These numbers are astronomical, to say the least. Almost 70% of all business financial losses arise from only ten circumstances (just ten!), with fires the single largest identified cause, followed by aviation crashes and human-related errors.

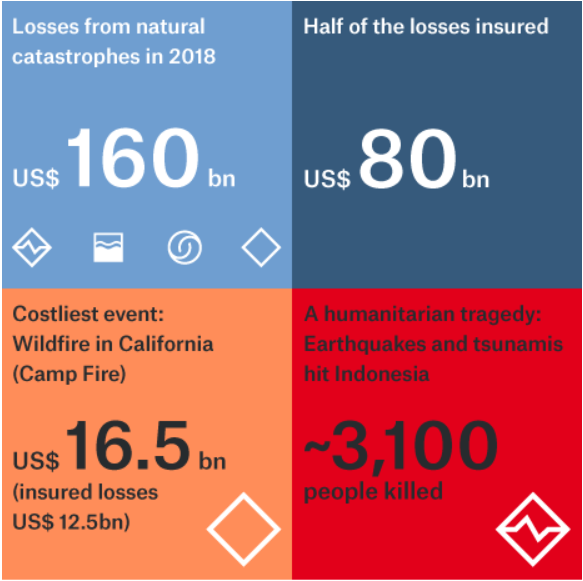

Last year saw several natural catastrophes that triggered high insured losses, including the California wildfires and tropical cyclones that passed through Japan, the Philippines, the US and China. Insurers around the world are growing increasingly anxious, given how often such events have occurred in the past decade alone. The economic cost of last year's 394 natural catastrophe events came to $225B, with insurance covering $90B of that total, making it the fourth-costliest year on record for insured losses!

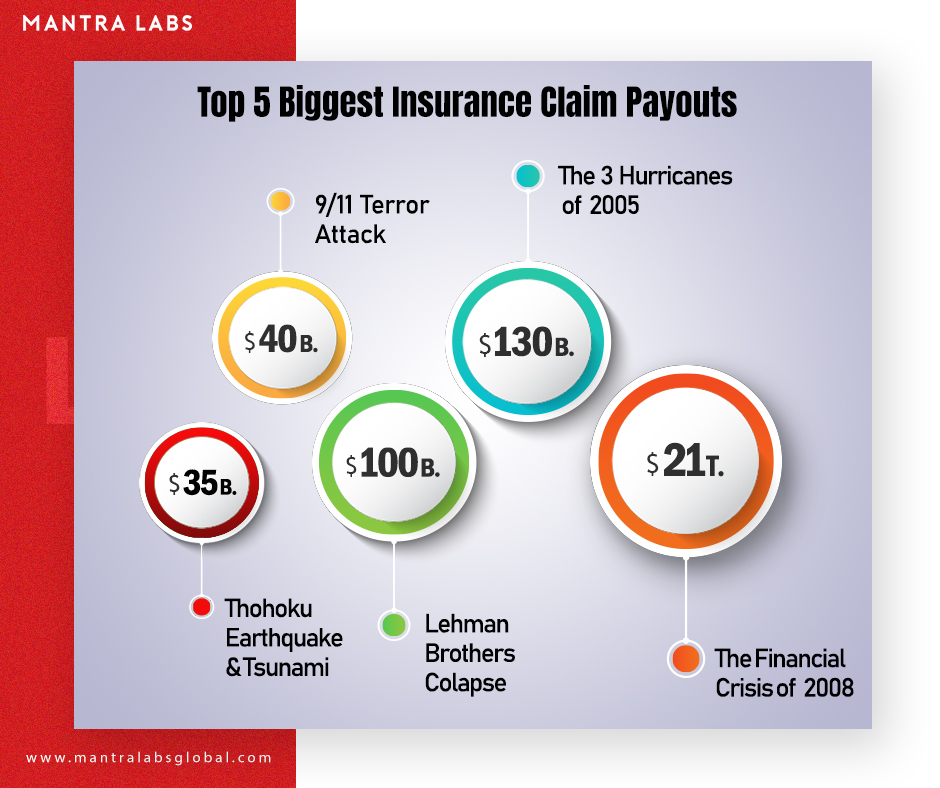

Regrettably, when the unforeseen strikes, the toll on both life and property is severe, and so are the claims that follow. Staggering as these figures are, they serve mainly as a point of comparison with the largest insurance payouts ever recorded. Here are the top five, in ascending order of value.

- The Tohoku Earthquake & Tsunami of 2011

In March 2011, shortly before three in the afternoon, a magnitude 9.1 earthquake struck off the coast of Japan. Within the next 30 minutes, while the destruction was still unfolding, waves as high as 133 ft (about 40 m) rose from the ocean and surged up to 10 km inland, taking the lives of more than fifteen thousand people. While damages for the earthquake alone were estimated at over $210B, only $35B of that was insured and ultimately paid out, although total payouts across all related losses could be much higher.

- 9/11 Tragedy

The 9/11 attacks were among the most infamous and tragic terrorist attacks ever carried out on a nation's sovereign soil, and will forever be entrenched in humanity's memory. In the aftermath, terrorism risk became extremely difficult for insurers to cover, and Congress responded by passing the Terrorism Risk Insurance Act in 2002, which assured government support after a catastrophic attack. The attacks caused far-reaching damage that was difficult to estimate, triggering insurance payouts of as much as $40B.

- Lehman Brothers Collapse

Once the fourth-largest investment bank in the U.S., the 158-year-old firm filed for Chapter 11 bankruptcy protection in 2008 after heavy losses on its exposure to subprime mortgage loans. Those loans had been packaged into mortgage-backed securities and sold on the secondary market, from where the risk spread throughout the financial system. The collapse followed an exodus of most of its clients and downgrades by credit rating agencies. The insurance payouts to creditors, taxpayers and private investors totalled over $100B.

- The Three Hurricanes of 2005

Three fierce Category 5 hurricanes, Katrina, Rita and Wilma, hit the U.S. during the record 2005 season of 28 storms, causing massive damage across the southern half of the country. With winds exceeding 205 km/h, the storms caused damages to the tune of $169B. Insurance payouts for Hurricane Katrina alone totalled $45B, and Katrina remains one of the costliest natural disasters in American history. Total insurance payouts for the three storms came to around $130B.

- The Financial Crisis of 2008

The global recession of 2008, which spread worldwide from the epicentre of the financial collapse on Wall Street, caused the greatest losses to companies, individuals and families seen in the last hundred years. Many draw a direct line from Lehman Brothers' role in the subprime mortgage crisis to the financial bedlam that soon followed worldwide. The payouts American insurers incurred during that time, although a closely guarded secret, are believed to have reached as much as $21T. Yes, that's T, as in a whopping 'Twenty-One Trillion Dollars!'

With $89B of last year's $90B in insured losses stemming from weather-related disasters, and following two back-to-back years of mega catastrophe losses, insurers are actively monitoring climate change reports to get a broader view of the changes the planet is undergoing.

The 'Insurance Protection Gap', the share of losses that goes uninsured (the lower this value, the better), is a global problem that affects emerging nations and developed countries alike. Properties and economies with high insurance penetration recover much more quickly after a natural disaster than economies that rely on governments to fund their recovery.

Backed by $595B of capital, the re/insurance industry continues to withstand these payouts. Its focus, however, will be on managing the cost of climate change and weather events by helping to further reduce the current protection gap of 60%.
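As a quick check, the 60% figure lines up with last year's natural catastrophe numbers quoted earlier ($225B in economic losses, $90B insured), assuming the gap is measured as the uninsured share of total economic losses:

$$
\text{Protection gap} = \frac{\text{Economic losses} - \text{Insured losses}}{\text{Economic losses}} = \frac{\$225\text{B} - \$90\text{B}}{\$225\text{B}} = \frac{\$135\text{B}}{\$225\text{B}} = 60\%
$$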

