IBM reports that businesses worldwide spend over $1.3 trillion a year handling roughly 265 billion customer calls. Chatbots have emerged as a way to cut the cost of handling customer queries, especially the most repetitive ones.

It’s quite common for businesses to assess the return on investment before adopting new technology.

However, chatbot ROI varies with the purpose the bot serves. For example, the ROI of an insurance chatbot differs from that of an HR chatbot. Here are the parameters to consider when calculating the return on investment from chatbots.

#1 Average Human Live-chat Cost

The total number of tickets raised per month and the number of agents involved give an idea of the average cost per contact.

According to Help Desk Institute, the average cost/minute for a live chat is $1.05, while the average cost per chat session is $16.80. Assuming an organization handles 10,000 chats in a month, the cost incurred sums up to $168,000/month.
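The arithmetic behind that monthly figure can be sketched in a few lines of Python. The two rates are the Help Desk Institute figures quoted above; the function name and the implied session length are illustrative additions, not part of the source data.

```python
# Figures quoted from the Help Desk Institute in the text above.
COST_PER_MINUTE = 1.05    # USD per minute of live chat
COST_PER_SESSION = 16.80  # USD per chat session


def monthly_live_chat_cost(chats_per_month: int,
                           cost_per_session: float = COST_PER_SESSION) -> float:
    """Return the monthly cost of handling every chat with a human agent."""
    return chats_per_month * cost_per_session


# Dividing the two rates gives the average session length they imply.
implied_minutes_per_session = COST_PER_SESSION / COST_PER_MINUTE

baseline_cost = monthly_live_chat_cost(10_000)
print(f"Implied session length: {implied_minutes_per_session:.0f} minutes")
print(f"Monthly live-chat cost: ${baseline_cost:,.0f}")
```

Replace `10_000` with your own monthly chat volume to get a baseline for the rest of the calculation.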

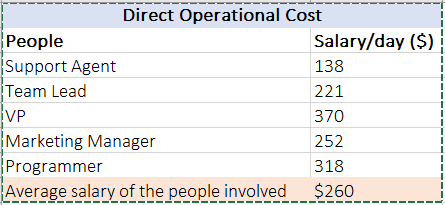

Depending on the number of people involved and their compensation, you can calculate the amount you’re spending on your organization’s customer support. Here’s a salary reference, which can be used in further calculations.

The salaries are taken from Job Futuromat 2019 and assume 12 months, 18 working days per month, and 8 hours per day.
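If the basis above is read as 12 months of 18 working days at 8 hours each (an interpretation of the note, not something the source spells out), an annual salary converts to an hourly rate as in this rough sketch. The example salary is hypothetical and is not taken from Job Futuromat.

```python
# Working-time basis as read from the salary note above (an interpretation).
MONTHS_PER_YEAR = 12
WORKING_DAYS_PER_MONTH = 18
HOURS_PER_DAY = 8


def hourly_rate(annual_salary: float) -> float:
    """Convert an annual salary into a cost per working hour."""
    working_hours = MONTHS_PER_YEAR * WORKING_DAYS_PER_MONTH * HOURS_PER_DAY
    return annual_salary / working_hours


# Hypothetical annual salary, used only to illustrate the conversion.
example_salary = 34_560
example_rate = hourly_rate(example_salary)
print(f"Hourly rate: ${example_rate:.2f}")
```

Multiplying this hourly rate by the hours your agents spend on chat gives a staff-cost estimate you can compare against the per-session figure.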

The actual operational cost also depends on the material resources invested, such as office space, conveyance, communications, and gadgets. You can factor these into your chatbot ROI calculator.

#2 Bot Installation Cost

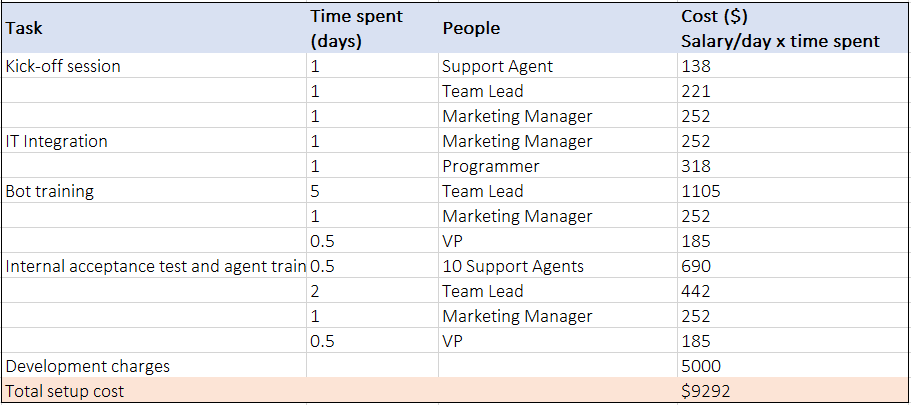

Bot installation costs cover brainstorming sessions, integration, and training for both bots and agents.

During kick-off sessions, stakeholders discuss the scope of the bot, define goals and responsibilities, and make a project plan. After this, programmers and managers integrate the bot on the organization’s website and other platforms. Customizing the bot according to the client’s support cases covers the bot training phase. Testing the bot and training agents to use it are also factored into the ‘bot’ installation costs.

According to Ometrics, the average development charge for a chatbot may range from $1,000 to $5,000. This is a one-time charge; after that, the bot developer may bill for maintenance.

If the chatbot requires a higher level of customization, the bot developer may charge extra. The number of days spent on bot installation also varies by industry and organization.

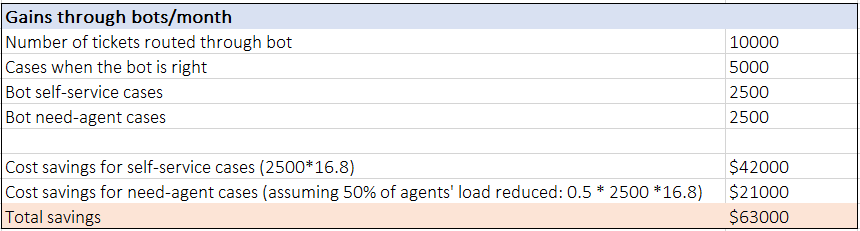

#3 Gains through Bots

Here we assume that all customer queries are routed through the bot and that it handles 50% of them accurately. Of the queries the bot handles, if half are fully self-served and the rest still require human intervention, the monthly gains from the bot work out as shown below.

You can find the exact cases and accuracy from your bot’s analytics dashboard.

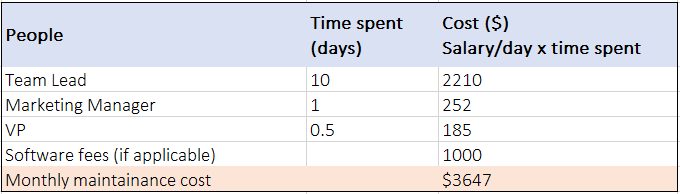
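The gain estimate can be sketched as a small function. The 50% shares come from the assumptions above. The text does not say how much is saved on queries that still need an agent, so `assisted_saving` is an assumption: a value of 0.5 is used here only because it reproduces the $63,000 monthly gain used in the ROI calculation later in this article (37.5% of the $168,000 baseline). The original figure may have been derived differently.

```python
def monthly_bot_gain(baseline_cost: float,
                     bot_handled_share: float,
                     self_served_share: float,
                     assisted_saving: float) -> float:
    """Estimate the monthly saving from routing queries through a bot.

    baseline_cost     -- monthly cost of handling all chats with agents
    bot_handled_share -- fraction of queries the bot handles accurately
    self_served_share -- fraction of bot-handled queries resolved with no agent
    assisted_saving   -- ASSUMPTION: fraction of cost still saved on
                         bot-handled queries that need an agent
    """
    self_served = bot_handled_share * self_served_share
    assisted = bot_handled_share * (1 - self_served_share)
    return baseline_cost * (self_served + assisted * assisted_saving)


# 50% handled by the bot, half of those self-served, and an assumed
# 50% saving on the assisted half.
gain = monthly_bot_gain(168_000, 0.5, 0.5, 0.5)
print(f"Estimated monthly gain: ${gain:,.0f}")
```

Setting `assisted_saving` to 0 gives the more conservative estimate in which only fully self-served queries count as savings.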

#4 Monthly Maintenance Cost

Bots, too, need human assistance to operate successfully. A bot's monthly maintenance cost is the sum of the organization's staff time it requires and the developer's charges. Here, let's assume a chatbot maintenance fee in the range of $100 to $1,000 a month. As with development charges, maintenance fees vary with the bot's capabilities.

#5 Chatbots Return on Investment Calculation

Return on investment is the ratio of the net benefit from an investment to its cost. It measures the efficiency of an investment. Mathematically, ROI = (Current Value of Investment – Cost of Investment) / Cost of Investment.

Since chatbots incur a one-time development cost and recurring monthly maintenance cost, here’s the chatbot ROI calculation from both perspectives.

Chatbot ROI during the first month: This includes the bot installation charges.

For the above case,

ROI = (Gains through bot – Installation charge – maintenance charge)/(installation charge + maintenance charge)

ROI = ($63,000 – $9,292 – $3,647)/($9,292 + $3,647)

ROI = 3.9 or 390%

Chatbot ROI after the first month: This excludes the bot installation charges.

For the above case,

ROI = (Gains through bot – maintenance charge)/(maintenance charge)

ROI = ($63,000 – $3,647)/($3,647)

ROI = 16.3 or 1630%
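Both calculations can be checked with a short Python sketch. The dollar amounts are the ones used in the worked example above; the function and constant names are illustrative.

```python
def roi(gain: float, cost: float) -> float:
    """Return ROI as a ratio: (gain - cost) / cost."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (gain - cost) / cost


# Figures from the worked example above (USD).
MONTHLY_GAIN = 63_000
INSTALLATION = 9_292
MAINTENANCE = 3_647

# The first month carries both the one-time installation and maintenance.
first_month_roi = roi(MONTHLY_GAIN, INSTALLATION + MAINTENANCE)

# Later months carry maintenance only.
later_month_roi = roi(MONTHLY_GAIN, MAINTENANCE)

print(f"First month ROI:  {first_month_roi:.1f}")
print(f"Later months ROI: {later_month_roi:.1f}")
```

Rounded to one decimal place, the two ratios match the 3.9 and 16.3 figures above.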

Using this method, you can build your own chatbot ROI calculator considering your own business parameters.
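As a starting point, here is a minimal sketch of such a calculator. It extends the per-month view to a cumulative ROI over several months, which is an extension for illustration and not part of the method described above. The class and field names are hypothetical, and the inputs are the figures from the worked example.

```python
from dataclasses import dataclass


@dataclass
class ChatbotRoiCalculator:
    """Illustrative calculator; all amounts are in the same currency."""
    monthly_gain: float          # savings attributed to the bot each month
    installation_cost: float     # one-time development and set-up charge
    monthly_maintenance: float   # recurring staff and developer charges

    def total_cost(self, months: int) -> float:
        """Installation plus maintenance over the given number of months."""
        return self.installation_cost + months * self.monthly_maintenance

    def cumulative_roi(self, months: int) -> float:
        """ROI over the whole period, as a ratio."""
        if months < 1:
            raise ValueError("months must be at least 1")
        cost = self.total_cost(months)
        return (months * self.monthly_gain - cost) / cost


calc = ChatbotRoiCalculator(monthly_gain=63_000,
                            installation_cost=9_292,
                            monthly_maintenance=3_647)

for months in (1, 6, 12):
    print(f"{months:>2} months: ROI = {calc.cumulative_roi(months):.1f}")
```

Swap in your own gain, installation, and maintenance figures to see how the one-time charge weighs less on ROI as the period gets longer.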

NLP and AI-powered chatbots can yield a better return on investment. For instance, Religare has deployed a service chatbot on its web portal, with WhatsApp integration, to handle customer queries. This has resulted in 10 times more customer interactions and 5 times more sales conversions.

Conclusion

For the above case, where bots are able to handle 50% of customer queries, the organization gains directly from the reduced support workload. The human time saved can be used for more productive tasks, which can eventually raise the organization’s productivity.

Powerful bots result in better success rates for customer-facing operations. For example, Diageo’s iDia chatbot has led to a 55% drop in help desk tickets.

Here are more enterprise chatbot use cases.

Researchers predict that by 2025, chatbots will handle more than 90% of B2C interactions. Chatbots could also cut operational costs by more than $8 billion per year within the next three years.

AI in insurance is projected to be worth $36B by 2026, with chatbots accounting for 40% of overall deployment, predominantly in customer service roles.


We specialize in developing industry-specific AI-powered chatbots. Drop us a word at hello@mantralabsglobal.com to learn more.
