The motor insurance market in India is worth approximately Rs 70,000 crore in gross written premiums. With newer and stricter regulations, more people are buying motor insurance. While the motor insurance market as a whole grew by 16% over the last year, premiums at the new digital insurers grew four- to six-fold.

This points to a shift in how customers prefer to buy motor insurance: instantly, from the convenience of a smartphone or computer. It is reasonable to expect that they will want to settle insurance claims just as conveniently. Machine vision technology can address much of this claims settlement challenge.

Current Claims Process

Let us take a quick look at the current claim settlement process for motor insurance. After an accident, the insured typically goes through these steps:

- The insured informs the insurance company about the accident, then files a physical claim along with the required documents, such as the Registration Certificate (RC), Driving Licence (DL), insurance policy, bills and receipts.

- The insurance company assigns a surveyor to inspect the damaged vehicle.

- The surveyor determines the cause and extent of the loss. The insurer then approves or rejects the claim and the claimed amount.

This process is time-consuming and stressful for the insured, and it is expensive for the insurer because of the physical inspection and other manual checks. The higher processing cost makes the business less profitable for the insurer, while the inconvenience and long wait make the product less attractive to the customer.

As more people buy motor insurance online, their expectations of claim settlement are changing too. Customers now expect a seamless digital process that settles claims in hours, if not minutes, rather than the several days that is the current industry norm.

A Machine Vision Solution for Instant Claims Processing: FlowMagic

At FlowMagic, we set out to solve this problem for both the insured and the insurer using artificial intelligence. We use machine vision to remove the need for a surveyor in all but the most complex cases.

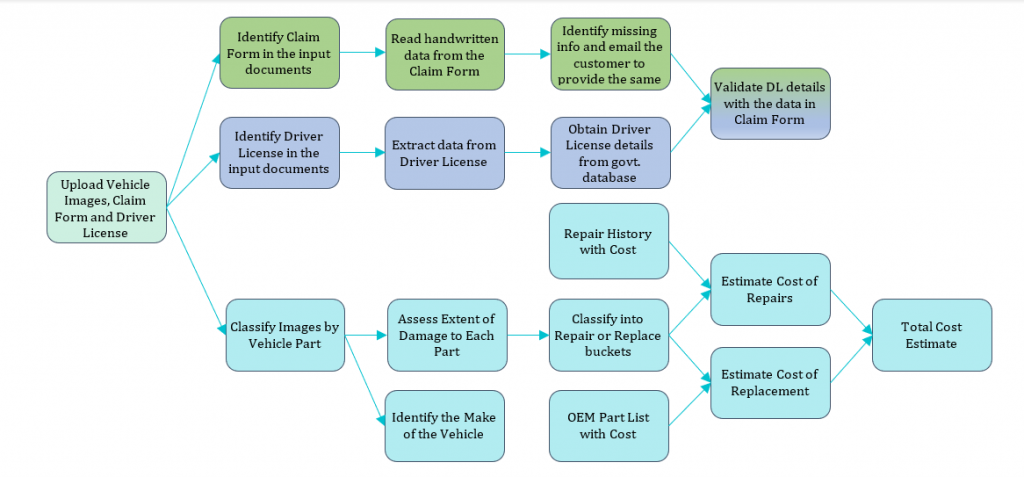

Using machine vision, we can process an image of a car and identify not only the damaged parts but also how severely each part is damaged and whether it needs repair or replacement. We have also analyzed repair cost data and images from tens of thousands of accident cases to build an AI costing model that estimates the cost of repairing a part from its photograph alone. As a result, in most cases the insurer no longer needs a surveyor or other manual checks, and the customer can submit a claim from a smartphone and receive an approval decision within minutes.

New Claims Settlement Process with FlowMagic

- After the accident, the customer photographs the damaged parts of the car and uploads the photos to the app, along with photos of the DL and RC.

- The AI model verifies the DL and RC details, estimates the extent of the damage, and determines whether each damaged part needs repair or replacement. It then calculates the repair and/or replacement cost and shares the estimate with the customer and the insurance company.

- Based on the outcome of the DL/RC verification and the repair estimate, the claim is either auto-approved within minutes or forwarded to a claims adjuster for review.
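The final routing step can be illustrated with a short Python sketch. This is a hypothetical illustration, not FlowMagic's actual implementation: it assumes the vision and costing models have already produced, for each damaged part, a severity score, a repair/replace label and a cost estimate. The names (`PartAssessment`, `Claim`, `route_claim`) and both thresholds (a Rs 50,000 auto-approval limit and a 0.8 severity cutoff standing in for "complex cases") are illustrative assumptions.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Tuple


class Action(Enum):
    """Whether the vision model judged a part repairable or in need of replacement."""
    REPAIR = "repair"
    REPLACE = "replace"


class Route(Enum):
    """Possible outcomes of the triage step."""
    AUTO_APPROVE = "auto_approve"
    ADJUSTER_REVIEW = "adjuster_review"


@dataclass
class PartAssessment:
    part: str
    severity: float        # hypothetical damage score in [0, 1] from the vision model
    action: Action
    estimated_cost: float  # rupees, from a hypothetical costing model


@dataclass
class Claim:
    documents_verified: bool  # outcome of the DL/RC check
    parts: List[PartAssessment]


# Hypothetical business thresholds; a real insurer would set its own.
AUTO_APPROVE_LIMIT_RS = 50_000.0
MAX_AUTO_SEVERITY = 0.8


def route_claim(claim: Claim) -> Tuple[Route, float]:
    """Return the routing decision and the total estimated cost of the claim."""
    total = float(sum(p.estimated_cost for p in claim.parts))
    if not claim.documents_verified:
        return Route.ADJUSTER_REVIEW, total  # DL/RC could not be verified
    if not claim.parts:
        return Route.ADJUSTER_REVIEW, total  # no damage detected in the photos
    if total > AUTO_APPROVE_LIMIT_RS:
        return Route.ADJUSTER_REVIEW, total  # estimate too large to approve unattended
    if any(p.severity > MAX_AUTO_SEVERITY for p in claim.parts):
        return Route.ADJUSTER_REVIEW, total  # treat very severe damage as a complex case
    return Route.AUTO_APPROVE, total


if __name__ == "__main__":
    claim = Claim(
        documents_verified=True,
        parts=[
            PartAssessment("front bumper", 0.4, Action.REPAIR, 6_000.0),
            PartAssessment("headlamp", 0.7, Action.REPLACE, 12_000.0),
        ],
    )
    decision, total = route_claim(claim)
    print(decision.value, total)  # auto_approve 18000.0
```

Keeping the thresholds as plain constants makes it easy to see how tuning them shifts the balance between straight-through approvals and adjuster reviews; a production system would more likely load such limits from configuration, for example per policy type.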

Every stakeholder in the insurance value chain can use the solution and benefit from it.

Insurance Company: By integrating this solution with their mobile applications, insurance companies can receive claim intimations quickly, along with a reasonable estimate of the repair cost. The damage severity analysis also helps the insurer negotiate with the garage over whether a part needs repair or replacement.

Service Center or Garage: Using the machine vision in FlowMagic, multi-brand garages and service centers can quickly assess the damage to any car brought to them and send a prompt quotation to the insurance company. Insurance companies can rely on this quotation because it is generated by a robust AI model.

End Customer: An end customer can also use our free mobile application to get a repair estimate. This can be a starting point for an informed negotiation with a garage.

To learn more about how FlowMagic can transform the way you settle your motor insurance claims or discuss your broader AI goals, please get in touch with us at hello@mantralabsglobal.com


About the author: Himanshu Saraf is a Capital Markets Director at Mantra Labs. He also leads the company's Artificial Intelligence (AI) and Machine Learning initiatives.
