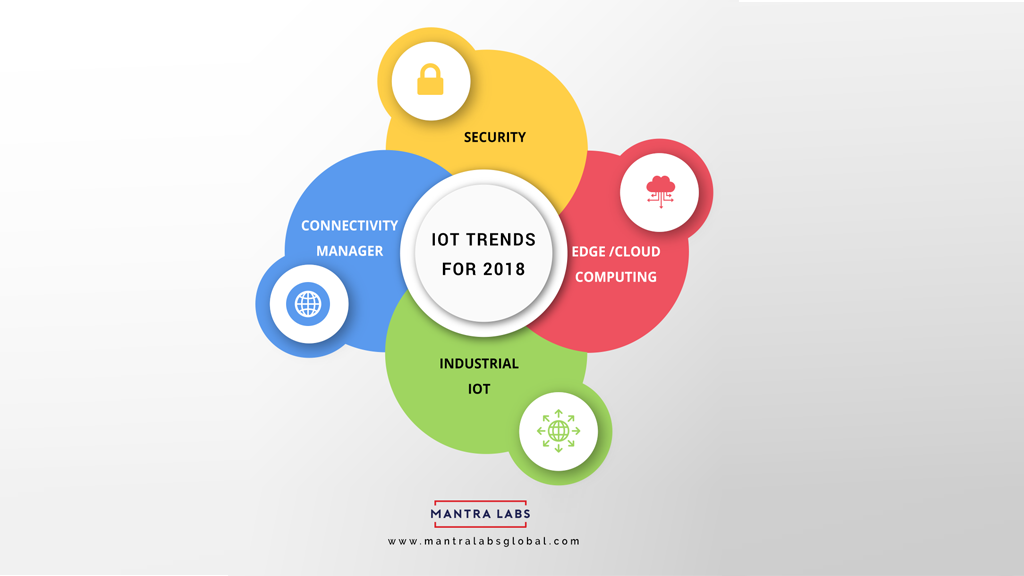

We spoke with a number of IT leaders and industry experts about what to expect from IoT in the coming year and which IoT trends are likely to dominate 2018.

The following are the Internet of Things trends to watch in 2018.

1. The IoT industry will bring a changed awareness around security and risk:

Security concerns will be high on the list. We have reached a point in the evolution of IoT when we need to re-think the types of security we are putting in place. Have we truly addressed the unique security challenges of IoT, or have we just patched existing security models into IoT with the hope that it is sufficient?

IoT presents a different kind of risk. Businesses need to understand that data from sensors and machine-to-machine communications is also stored in the cloud. In particular, facilities deploying IoT-connected devices need to think about communication and the security protocols between devices: sensor-to-sensor communication, sensor-to-gateway communication, and updating and maintaining all on-premises equipment to better secure their data.
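To make the sensor-to-gateway point concrete, here is a minimal sketch of one common building block: signing each reading with an HMAC so the gateway can detect tampering. This is an illustration under stated assumptions, not a method described by the experts quoted here. The key, the function names `sign_reading` and `verify_reading`, and the message layout are all hypothetical, and the sketch does not cover encryption or replay protection.

```python
import hashlib
import hmac
import json

# Hypothetical pre-shared key for illustration only; a real deployment
# would provision and rotate a unique key per device.
SHARED_KEY = b"example-device-key"


def sign_reading(sensor_id: str, value: float, key: bytes = SHARED_KEY) -> dict:
    """Sensor side: serialise the reading and attach an HMAC-SHA256 tag."""
    payload = json.dumps({"sensor_id": sensor_id, "value": value}, sort_keys=True)
    tag = hmac.new(key, payload.encode("utf-8"), hashlib.sha256).hexdigest()
    return {"payload": payload, "tag": tag}


def verify_reading(message: dict, key: bytes = SHARED_KEY) -> bool:
    """Gateway side: recompute the tag and compare it in constant time."""
    expected = hmac.new(
        key, message["payload"].encode("utf-8"), hashlib.sha256
    ).hexdigest()
    return hmac.compare_digest(expected, message["tag"])
```

A gateway would call `verify_reading` on every incoming message and drop anything that fails the check. In practice this would sit alongside transport encryption such as TLS rather than replace it.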

Tom Smith, a research analyst for DZone.com, queried these IT professionals for their predictions for 2018. Here is what the IoT experts had to say about IoT trends for 2018.

IoT security will continue to dominate as a major concern, and I would expect the rise of several IoT-driven platforms to rise to the surface in an attempt to address and manage this, says Lucas Vogel, Founder, Endpoint Systems.

My hope is that there will be some adopted regulations around IoT security and compliance; otherwise, there will undoubtedly be more frequent and massive attacks. The fully-connected home will move closer to being a reality, and there will be unique solutions that address actual needs instead of just being “internet-connected”, says Mike Kail, CTO, CYBRIC.

2. Businesses will need to embrace the implementation of edge and cloud computing:

Edge computing, sometimes referred to as fog computing, will continue to rise. The ability to run software at the edge is turning out to be one of the most promising accelerators of IoT adoption, given the cost savings and the ability to quickly build large-scale systems.
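As a rough illustration of where the cost savings can come from, the sketch below shows an edge device reducing a batch of raw sensor readings to a single summary record before anything is sent to the cloud. The function name `summarise_at_edge`, the record fields, and the threshold are assumptions made for this example, not part of any particular platform.

```python
from statistics import mean


def summarise_at_edge(readings: list[float], threshold: float) -> dict:
    """Reduce a batch of raw readings to one summary record for the cloud.

    Readings above `threshold` are kept individually so that unusual
    values are not hidden by the aggregate.
    """
    if not readings:
        raise ValueError("readings must not be empty")
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": mean(readings),
        "alerts": [r for r in readings if r > threshold],
    }


# Example: four raw readings become one record to upload.
summary = summarise_at_edge([20.0, 21.0, 22.0, 35.0], threshold=30.0)
```

Instead of uploading every reading, the device sends one small record per batch, which is the kind of bandwidth and storage reduction that makes large deployments cheaper to run.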

3. Connectivity Management:

Another exciting new area involves the management of whole IoT systems or solutions. Device management and connectivity management have been around for several years already, but now that the pieces of IoT systems are coming together to form whole enterprise-scale solutions, management of these solutions has moved higher up the “tech wish list” for organizations.

4. IoT vs IIoT:

In addition, the separation between consumer IoT and Industrial IoT is becoming clearer all the time. One key distinction that is now apparent is that consumer IoT can often focus on greenfield installations but IIoT must enable brownfield installations. The investments in systems and equipment that were made by industrial firms over the last decades will continue to be in place and will need to be incorporated into IIoT solutions.

We’re seeing a trend towards a lot more IIoT use cases. As we move into 2018, we will see much higher adoption of industrial IoT, with sensors making a big impact in the manufacturing, automotive, aerospace and engineering sectors. Other areas where we expect greater uptake of IoT systems include shipping, retail, agriculture, and healthcare. This expansion will trigger a need to hire many more IoT professionals and will likely lead to many new types of IoT-specific roles within companies.

Many verticals still have business operations that involve manual observation of equipment status, inventory levels, and other key metrics. Where there is currently manual observation, there may be a great opportunity for a high-ROI project involving IoT. Some verticals that have a lot of manual observations are Oil & Gas, Energy Distribution, Supply Chain, and Telecommunications. The repeating theme is high-value infrastructure that is spread out geographically.

Thanks to Kilton Hopkins, IoT Program Director for Northeastern University-Silicon Valley and CEO of IOTRACKS, for his input to this article.
