The antiquated commodity of financial ‘Coverage & Protection’ is getting a makeover. Conventional epigrams like ‘Insurance is sold and not bought’ are becoming passé. Customers are now more open than ever before to buying insurance themselves, as opposed to being sold it by an agent. The industry itself is witnessing accelerated digitalization on the back of 4G, Augmented Reality, and Artificial Intelligence-based technologies like Machine Learning & NLP.

As new technologies and consumer habits keep evolving, so do insurance business models. The reality for many insurance carriers is that they still don’t understand their customers with great accuracy and detail, which is why intermediaries like agents and distributors still hold incredible market power.

On the other hand, distribution channels are turning hybrid, which is forcing carriers to be proficient across their entire channel mix. Customer expectations for 2020 will increasingly reflect a demand for simplicity, transparency, mobility, and speed in service delivery.

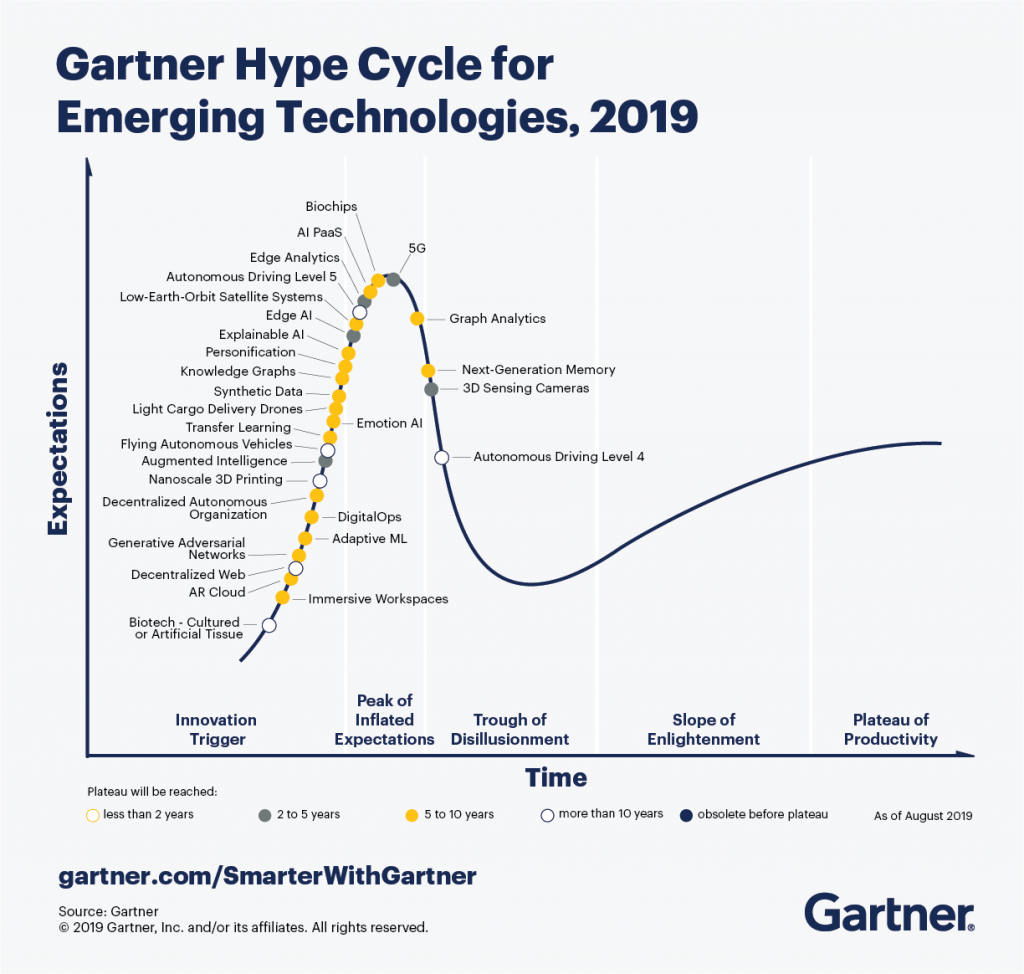

A recently published Gartner Hype Cycle highlights 29 new and emerging technologies that are bound for greater business impact and that will ultimately dissolve into the fabric of insurance.

For 2020 and beyond, newer technologies are emerging alongside older, steadily maturing ones, creating a wider stream of opportunities for businesses.

Irrespective of the technology application adopted by insurers, real, actionable insights are the name of the game. Without them, there can be no long-term gains. As Forrester research puts it, “Those that are truly insights-driven businesses will steal $1.2 trillion per annum from their less-informed peers by 2020”.

Based on the major trends identified in the Hype Cycle, five of the most disruptive near-term technologies, and their use cases, are profiled below.

- Emotion AI

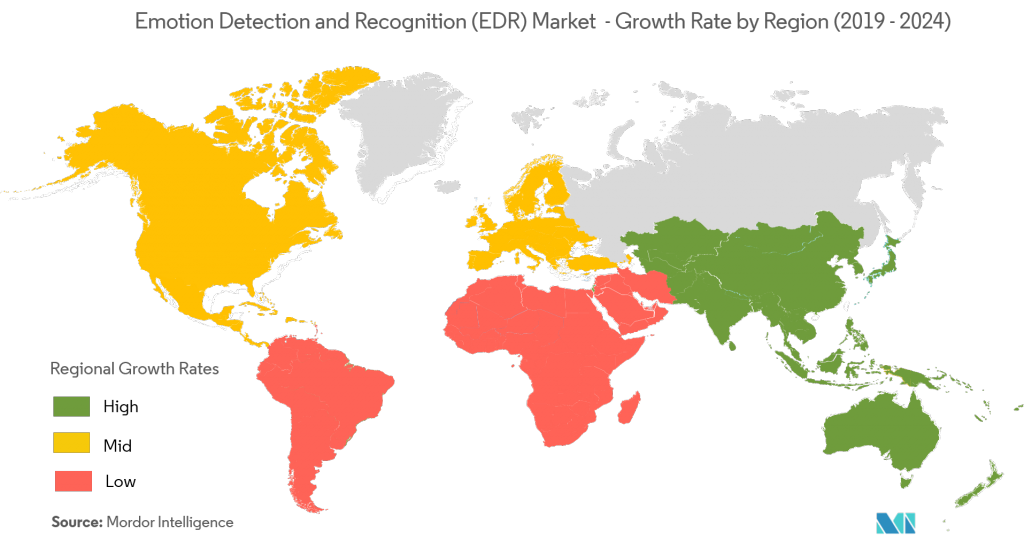

Emotion Artificial Intelligence (AI) refers to AI systems that can measure, understand, simulate and react to human emotions in a natural way. One purported use is detecting insurance fraud based on audio analysis of the caller.

For insurers, sentiment and tone analysis captured from chatbots fitted with emotional intelligence can reveal deeper insights into the buying propensity of an individual, while also helping to explain the reasons influencing that decision.

Autonomous cars can also be fitted with sensors, cameras or mics that relay information over the cloud, which can be translated into insights concerning the emotional state of the driver, the driving experience of the other passengers, and even the safety level within the vehicle.

Gartner estimates that at least 10% of personal devices will have emotion AI capabilities, either on-device or via the cloud, by 2022. The share of devices with emotion AI capabilities is currently around 1%.

- Augmented Intelligence

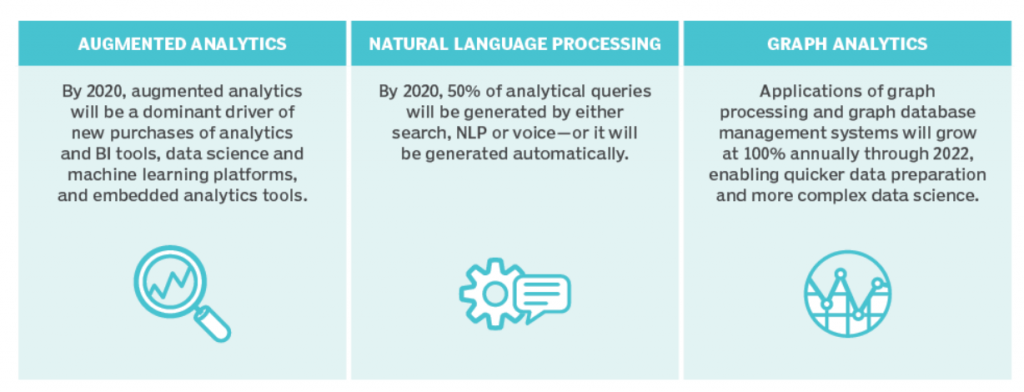

Augmented Intelligence is all about process intelligence. Widely touted as the ‘future of decision-making’, this technology involves a blend of data, analytics and AI working in parallel with human judgement. If scripting is rules-based automation, then ‘augmenting’ is engagement- and decision-oriented.

For most insurance carriers today, this manifests as automated back-office tasks, but over the next few years the technology will be found in almost all internal and customer-facing operations. Insurers can potentially offer personalised services based on the client’s individual capacity and exposure to risk, creating opportunities for cross-selling and up-selling.

Source: Gartner Data Analytics Trends for 2019

For instance, online identity verification is a real-time application that not only enhances human decision-making, but also requires human intervention only in highly critical cases. The global value from augmented AI tools is expected to touch $4 trillion by 2022.
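The identity verification example can be pictured as a simple routing rule: the system decides clear-cut cases on its own and hands only the uncertain ones to a person. The sketch below is a minimal illustration of that idea, not a description of any real product. The `VerificationResult` type, the `route` function and both threshold values are hypothetical, and treating a mid-range match score as the "critical" case is an assumption made for the example.

```python
from dataclasses import dataclass

# Hypothetical thresholds, chosen only for illustration.
AUTO_APPROVE_AT = 0.90  # at or above this score, approve without review
AUTO_REJECT_AT = 0.20   # at or below this score, reject without review


@dataclass
class VerificationResult:
    applicant_id: str
    match_score: float  # assumed model confidence, from 0 to 1, that the ID matches


def route(result: VerificationResult) -> str:
    """Return 'approve', 'reject' or 'human_review' for one verification."""
    if not 0.0 <= result.match_score <= 1.0:
        raise ValueError("match_score must be between 0 and 1")
    if result.match_score >= AUTO_APPROVE_AT:
        return "approve"
    if result.match_score <= AUTO_REJECT_AT:
        return "reject"
    # Everything in between is ambiguous, so a person makes the call.
    return "human_review"


if __name__ == "__main__":
    batch = [
        VerificationResult("A-001", 0.97),
        VerificationResult("A-002", 0.55),
        VerificationResult("A-003", 0.05),
    ]
    for item in batch:
        print(item.applicant_id, route(item))
```

In a setup like this, widening or narrowing the gap between the two thresholds is what controls how often a human is pulled into the loop.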

- AR Cloud

The AR Cloud is, simply put, a real-time 3D map of an environment, overlaid onto the real world. Through this, experiences and information can be shared without being tied down to a specific location. Virtual content placed using real-world coordinates, with its associated metadata, can be instantly shared and accessed from any device.

For insurers, there is a wide range of opportunities to entice shopping customers on an AR Cloud-based platform by presenting personalized insurance products relevant to the items they are considering buying.

The AR ecosystem will be a great way to explain insurance plans to customers, provide training and guidance for employees, assist in real-time damage estimation, and improve the quality of ‘moment-of-truth’ engagements. This allows modern insurance products to co-exist seamlessly along the buying journey.

- Personification

Personification is a technology that is wholly dependent on speech and interaction. Through this, people can create avatars of themselves that can form complex relationships. This Virtual Reality-based concept is expected to become the next way of communicating and forming new interactions.

VR applications such as accident recreation, customer education and live risk assessment can help insurers lower costs for their customers and personalise the experience.

Brands have already begun working their way into this space because, as they see it, if younger generations are inevitably going to use this technology for longer portions of their day for work, productivity, research, entertainment, even role-playing games, they will shop and buy this way too.

- Flying Autonomous Vehicles and Light Cargo Drones

Although this technology is still about a decade away from being commercially realized, the non-flying form is about to make its greatest impact since its original conception. Regulations are the biggest obstacle to the technology taking off, even as its functionality continues to improve.

The Transportation & Logistics ecosystem is on the brink of a complete shift, which will create demand for a wide array of insurance-related products and services that cover autonomous vehicles and cargo delivery using light drones.

While automation continues to bridge the gaps, InsurTechs and insurance carriers will need to stay ahead of the curve and adopt newer strategies to drive sustainable growth.


Mantra Labs is an InsurTech100 company solving complex front & back-office processes for the Digital Insurer. To know more about our products & solutions, drop us a line at hello@mantralabsglobal.com.
