Insurance customers are most vulnerable when they file a claim. Be it life or general insurance, claims are filed in distress. This is also a critical moment for Insurers. The claims experience they deliver determines customer loyalty, which in turn drives referrals in the long run. In the Insurance industry, where products and pricing are almost the same across competitors, customer experience becomes the main differentiator.

The catch, however, is that Insurers serve several generations at once, each with its own preferences. They therefore need to meet the varied and shifting demands of different sets of policyholders. How?

AI can enhance the overall claims experience for your customers through faster, automated claims support and multichannel integration. Let’s look at the details.

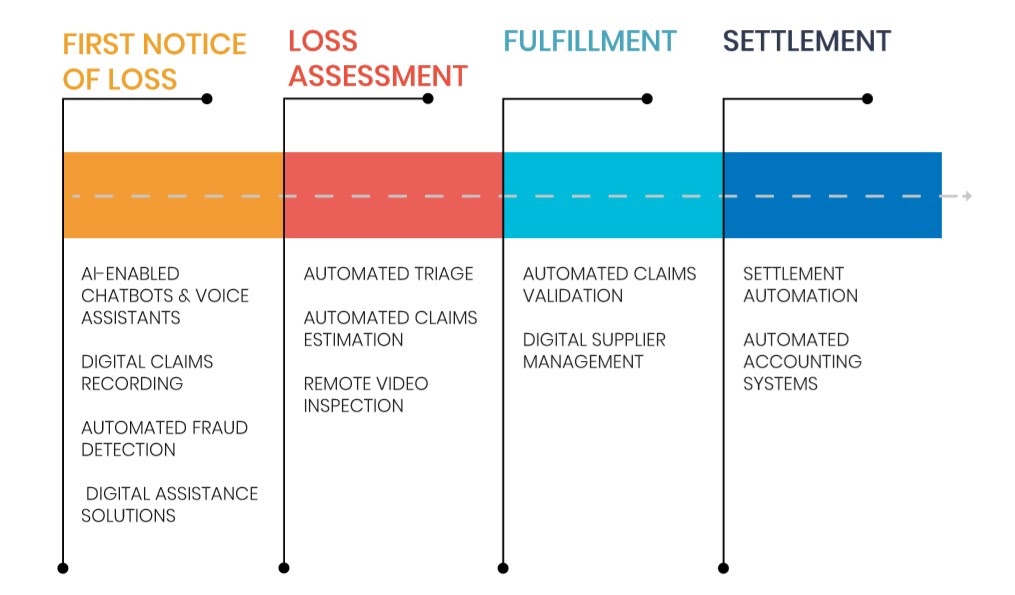

AI in claims management cycle

According to the State of AI in Insurance report, 74% of Insurance leaders believe that the adoption of AI is most prominent in claims processing, followed by underwriting & risk management (48%), fraud prevention (39%), and customer and agent onboarding (22%).

AI has the potential to deliver a zero-touch integrated claims experience from the first notice of loss to the final settlement.

Delivering a faster, integrated claims experience requires redesigning the entire claims journey from the customer’s point of view, so that every touchpoint across the claims management cycle offers a seamless digital interaction. It also involves optimizing back-office and front-office processes with intelligent automation.

Typically, the claims experience for customers starts with the first notice of loss and passes through several stages before final settlement. McKinsey reveals that nearly 80% of claims filed are manually reviewed by adjusters. The four main milestones in the claims settlement journey (as illustrated in the diagram above) are first notice of loss, loss assessment, fulfillment, and settlement. AI can enhance customer experience at each of these stages.

- First Notice of Loss: Here, the loss has occurred and the customer is already devastated. By making help available as quickly and as easily as possible, Insurers can ensure a reassuring experience in an otherwise stressful situation. AI technologies like NLP chatbots, voice-assisted services, and digital claims recording can help in providing instant support. Also, with machine learning-based fraud detection algorithms, Insurers can catch fraud at the very beginning and save the resources that would otherwise go into assessment and fulfillment.

- Loss assessment has been a cause of delays in traditional insurance claims processes, because Insurers used to manually check damages, work out optimum repair costs, and only then calculate the settlement amount. The longer loss assessment takes, the more the brand’s value declines in the customer’s eyes. With automated triage & claims inspection and remote inspection & evaluation, loss assessment can be done in near real time.

- During the fulfillment process, AI can help insurers with automated claims validation and digital supplier management.

- Immediate settlement is what customers seek. With settlement automation and an automated accounting system, Insurers can provide instant settlement. For instance, Lemonade, a peer-to-peer insurance provider, has reported settling some claims in less than a minute!
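To make the four milestones concrete, here is a minimal Python sketch of how a claim might move through them with an early triage check. It is an illustration under stated assumptions, not a description of any Insurer’s actual system: the `Claim` fields, the `FRAUD_THRESHOLD` and `AUTO_SETTLE_LIMIT` values, and the idea that a fraud score arrives from an upstream model are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    """The four milestones named in the article, in order."""
    FNOL = "first notice of loss"
    ASSESSMENT = "loss assessment"
    FULFILLMENT = "fulfillment"
    SETTLEMENT = "settlement"


@dataclass
class Claim:
    claim_id: str
    amount: float        # claimed amount, currency-agnostic
    fraud_score: float   # 0.0 to 1.0; assumed to come from an upstream ML model
    stage: Stage = Stage.FNOL
    history: list = field(default_factory=list)


# Hypothetical thresholds, chosen only for illustration.
FRAUD_THRESHOLD = 0.7
AUTO_SETTLE_LIMIT = 1000.0


def needs_human_review(claim: Claim) -> bool:
    """Flag claims that look risky or are too large to settle automatically."""
    return claim.fraud_score >= FRAUD_THRESHOLD or claim.amount > AUTO_SETTLE_LIMIT


def process(claim: Claim) -> Claim:
    """Route flagged claims to an adjuster at FNOL; auto-complete the rest."""
    if needs_human_review(claim):
        claim.history.append("routed to adjuster at " + claim.stage.value)
        return claim
    for stage in Stage:  # Enum members iterate in definition order
        claim.stage = stage
        claim.history.append("auto-completed " + stage.value)
    return claim


small = process(Claim("C-1", amount=250.0, fraud_score=0.1))
risky = process(Claim("C-2", amount=250.0, fraud_score=0.9))
print(small.stage.value, "|", risky.history[0])
```

The point of checking at the first notice of loss is that a flagged claim never consumes automated assessment or fulfillment effort, while a small, low-risk claim passes straight through to settlement.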


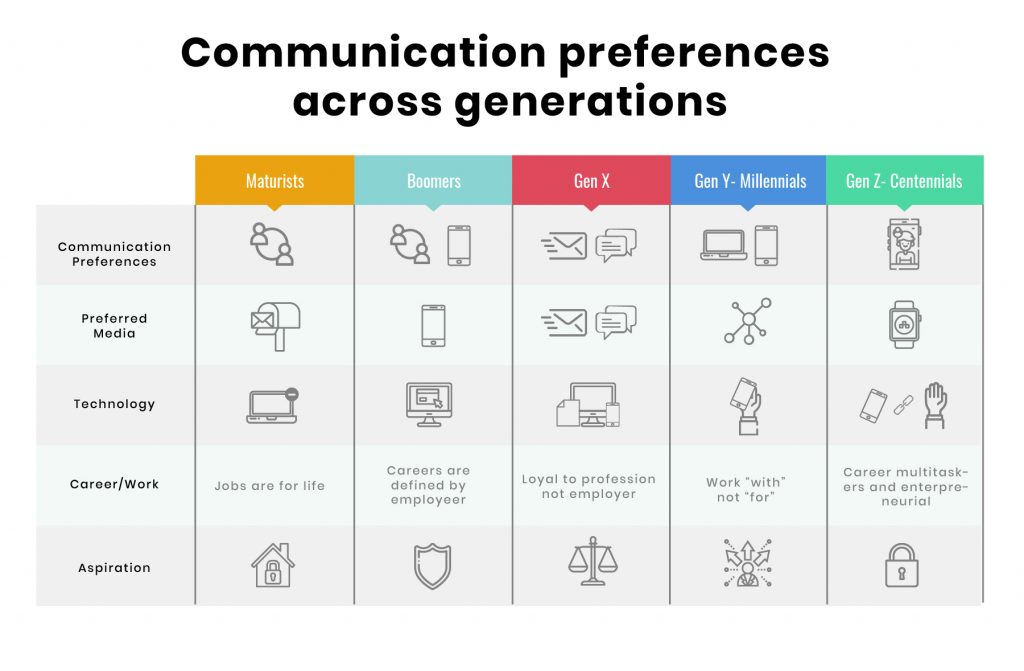

AI can enhance claims experience in multichannel Insurance models

Insurers have to deal with a broad spectrum of customers: Maturists (the technology non-users), Boomers (who have only recently started using technology), Millennials (the digital immigrants), and Gen Z (the digital natives).

These different sets of customers differ not only in their policy preferences but also in the platforms they choose to use. For instance, Maturists still rely on face-to-face communication with customer representatives to address their claims concerns, whereas Millennials want every resolution at their fingertips.

The proliferation of communication channels has made it harder for Insurance carriers to deliver a consistent experience, and the generation gap will always remain. Accenture’s recent study reveals that nearly 89% of customers use at least one digital channel to interact with their brand. Surprisingly, only 13% of customers say that the digital and physical experiences are aligned. Therefore, the best approach is to adopt technology that fits the requirements of the past, present, and future.

AI and Machine Learning technologies make it possible to implement omnichannel and multichannel strategies at scale. However, there’s a fine line between omnichannel and multichannel communication models. Given the different customer demographics described above, let’s confine ourselves to the benefits of AI in multichannel integrations.

- AI technology enables Insurers to precisely understand different personas, policy preferences, and customer journeys.

- Based on customer personas, AI can augment what Insurance adjusters, claims managers, and other stakeholders know about claimants and their current situation, allowing them to address the circumstances with empathy.

- By capitalizing on the insights obtained through AI, Insurance decision-makers can redesign their IT infrastructure to scale customer experiences.

The crux

In the future, AI will play a crucial role in a completely automated end-to-end claims settlement process. This will bring a two-fold advantage. Insurers will be able to free human resources to provide emotional support to customers, and with instant resolution, customers will get a high-touch claims experience.

We specialize in customer experience consulting with domain expertise in modern Insurance and InsurTech solutions. Drop us a word at hello@mantralabsglobal.com for products and solutions focused on the claims experience.
