Apple didn’t have much to announce on the third day of WWDC 2016. It showcased some of the new updates and features and also talked about Swift 3.0.

The highlights of day 3 were:

[Watch the video on YouTube](https://www.youtube.com/watch?v=ZuCSgOxBvTs)

Here are some of the highlights planned for Swift 3.0, as listed in the Swift Evolution document:

- Stabilize the binary interface (ABI). The Swift team wants a stable ABI so that code built with different versions of the Swift compiler can link and run together at the binary level. This also fits with Swift being ported to other platforms.

- Complete generics. Swift uses generics (code written once that works with any type meeting its requirements) throughout its libraries, and Swift 3.0 aims to complete the implementation.

- Type system cleanup and documentation. Swift 3.0 will “Revisit and document the various subtyping and conversion rules in the type system, as well as their implementation in the compiler’s type checker.”

- Focus and refine the language. There’s little detail here as to how, but the Swift Evolution document notes that: “Swift’s rapid development has meant that it has accumulated some language features and library APIs that don’t fit well with the language as a whole. Swift 3 will remove or improve those features to provide better overall consistency for Swift.”

- API Guidelines. Swift 3.0 provides new design guidelines for developers building APIs.
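
To make the generics and API guidelines points more concrete, here is a minimal sketch in Swift 3 style. The names `Stack` and `largest(in:)` are invented for illustration and are not taken from Apple’s announcements or the Swift standard library; the sketch only assumes standard `Array` methods such as `append`, `popLast`, and `max`.

```swift
// Hypothetical generic container: written once, usable with any element type.
struct Stack<Element> {
    var items: [Element] = []

    // Mutating methods read as imperative verb phrases at the call site.
    mutating func push(_ item: Element) {
        items.append(item)
    }

    // Returns nil instead of trapping when the stack is empty.
    mutating func pop() -> Element? {
        return items.popLast()
    }

    var count: Int { return items.count }
}

// Hypothetical generic function constrained to Comparable elements.
// The argument label makes the call read as a phrase: largest(in: values).
func largest<T: Comparable>(in values: [T]) -> T? {
    return values.max()
}

var numbers = Stack<Int>()
numbers.push(3)
numbers.push(7)
let top = numbers.pop()

let biggest = largest(in: [4, 9, 2])
let lastAlphabetically = largest(in: ["pear", "apple"])
```

The same `Stack` and `largest(in:)` definitions work for integers, strings, or any other suitable type, which is the kind of reuse generics are meant to provide. The argument labels follow the spirit of the new guidelines, which favor call sites that read clearly.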

An out-of-scope section details what Swift 3.0 won’t be doing; in particular, it won’t add C++ interoperability, so C++ programmers won’t be able to integrate their code the way Objective-C developers can.

According to the document: “Interoperability with C++ libraries would enhance Swift’s ability to work with existing libraries and APIs. However, C++ itself is a very complex language, and providing good interoperability with C++ is a significant undertaking that is out of scope for Swift 3.0.”

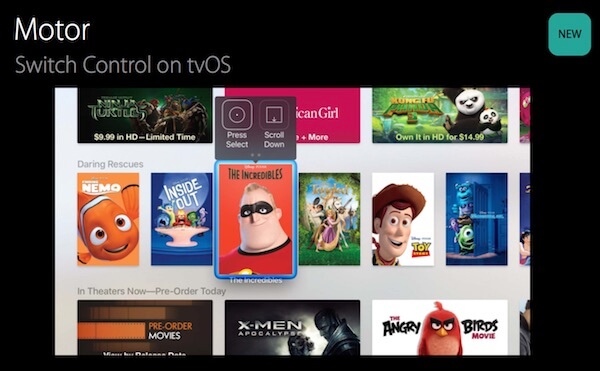

Switch Control:

Switch Control can now be used to interact with the tvOS interface using a single physical button, such as a switch on a wheelchair. There is both a cursor interface that highlights elements on the screen and an alternative interface with an on-screen remote. Accessibility users who already use Switch Control with an iOS device or Mac can automatically use the function on tvOS without re-pairing a switch.

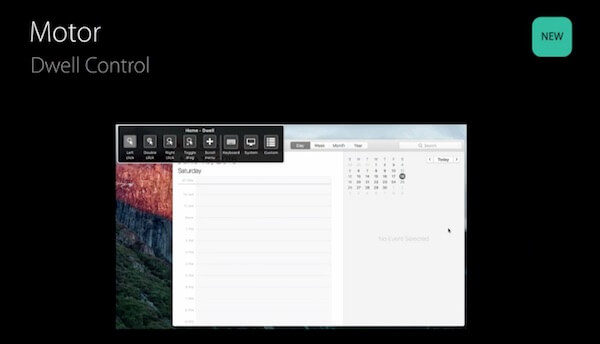

Dwell Control:

Dwell Control is a new feature for macOS Sierra that lets users control the cursor on a Mac using assistive technologies and hardware, such as a headband with reflective dots, or eye movements. When the cursor dwells on a location, a timer appears; when it expires, it triggers a mouse click or another customizable action.

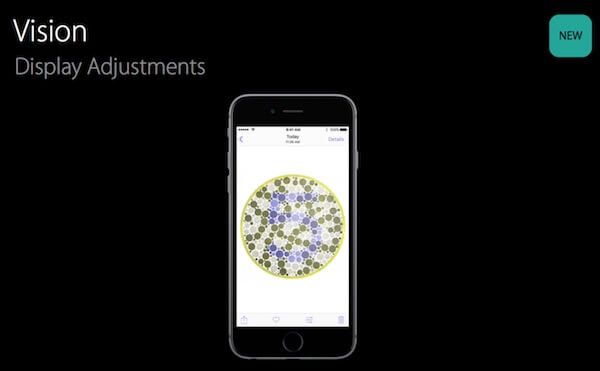

Vision:

Apple has made display and color adjustments and introduced the option to tint the entire display on Mac, Apple TV, and iOS devices, which can significantly increase contrast and readability.

Taptic Time:

Taptic Time is a new VoiceOver feature on watchOS 3 that uses a series of distinct taps from the Taptic Engine to help someone tell the time silently and discreetly.

Magnifier:

Magnifier is a new systemwide iOS 10 feature that lets users magnify objects in their physical environment with the camera. Various color filters, such as grayscale and inverted grayscale, are supported to increase contrast.

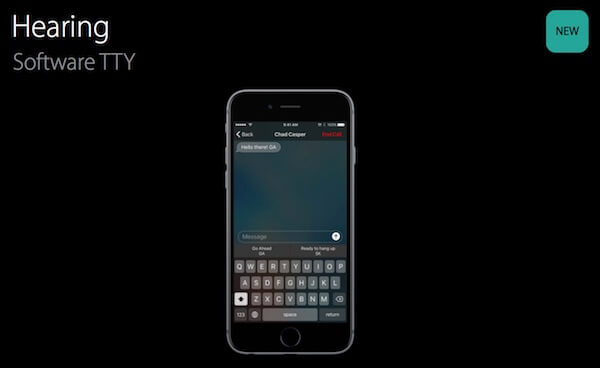

Hearing:

iOS 10 allows software TTY calls to be placed without any additional hardware. The calls work with legacy TTY technology and make it easy to dial a non-TTY number through your carrier’s relay service. There is also a built-in TTY-specific QuickType keyboard.

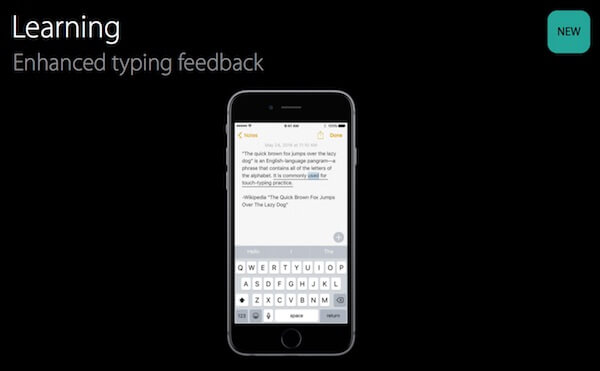

Learning:

iOS 10 has a number of enhancements designed to help people with dyslexia. There are improvements to Speak Selection and Speak Screen to help people better understand text that has already been entered, and there is new audio feedback for typing to help people immediately catch mistakes.

Day 3 started slowly, but these announcements made it exciting. Expectations for day 4 are also high. Stay with Mantra Labs for day 4 updates.

If you have any queries, reach us at hello@mantralabsglobal.com.
