Across large organizations, operations teams spend a significant amount of time and resources manually entering data into downstream systems. This affects insurers in particular, because their business relies heavily on back-office processes. The bulk of the insurance workforce is concentrated in operations and support functions (e.g. policy issuance and servicing). Here, data is typically unstructured and locked away in heaps of paper documents, emails, scanned images, Excel worksheets, PDFs, and Word reports.

In insurance, at least 90% of unstructured documents are typically processed manually, and the average insurance policy administration system is 15 to 20 years old. As a result, insurers at times lag behind their financial services peers.

To make the most of the large volumes of inbound data stored in siloed systems, firms have recently begun to take a serious look at streamlining data migration with AI-based tools. A tremendous number of working hours are lost to repetitive tasks, which leads to longer processing times and lower throughput for insurers.

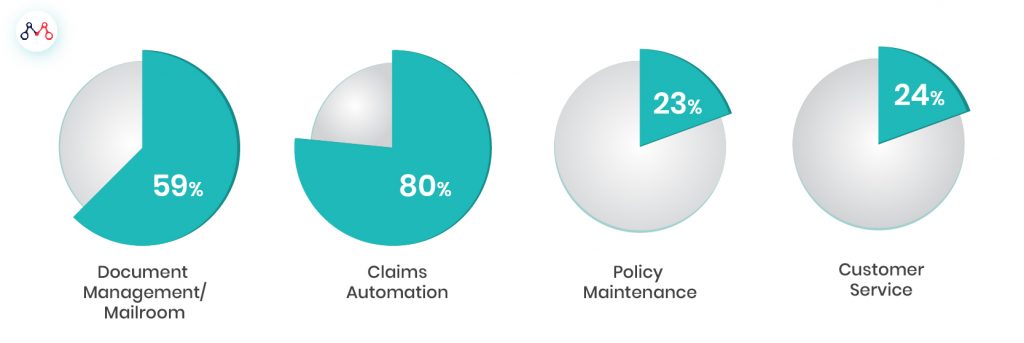

Proportion of Unstructured Data in P&C Insurance (%)

Source: SPS Data

AI Gets Holed Up In Silos

According to a recent IDG study titled ‘Future of Work’, fewer than 50% of global enterprises have deployed intelligent automation technologies (such as AI, cognitive automation or RPA), while over two-thirds report difficulty integrating people, processes and AI. Over fifty percent of enterprises identify siloed deployments and overwhelmed internal application development teams as long-term issues. This can create friction between teams operating in silos and those trying to derive insights from unstructured documents. Nearly a third of enterprises identified getting AI into production and live services as the single biggest challenge to overcome.

According to a McKinsey paper, intelligent process automation is at the core of next-generation operational business models.

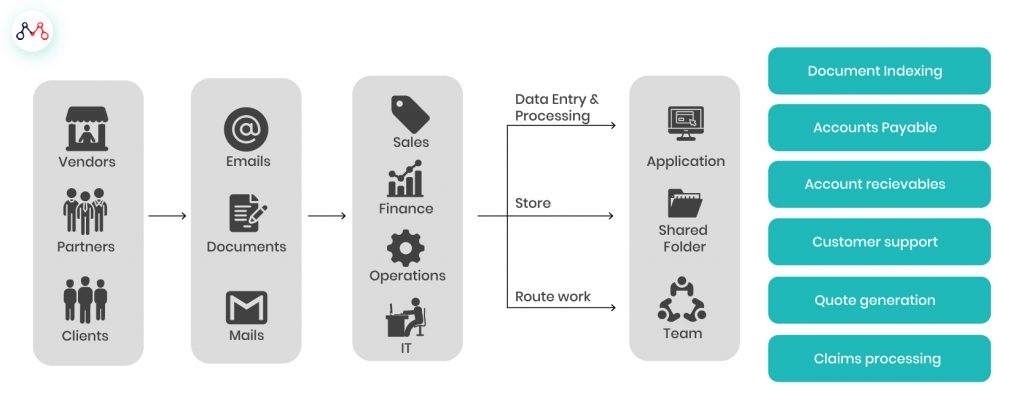

The Need For Intelligent Document Processing

Source: Imaginea

A New Platform

Mantra Labs has launched a solution that addresses this pain point for insurers: an intelligent platform built especially for siloed environments. The solution addresses several dependency issues and is built to scale, making it a vendor-neutral platform that doesn’t require deep coding skills. It is named FlowMagic, a simple and easy-to-use visual AI platform for insurer workflows.

FlowMagic applies proprietary AI, machine learning and NLP techniques to extract any target data from unstructured documents. At the recent 4th Annual Insurance India Summit and Awards 2019 in Mumbai, Mantra Labs presented a live demonstration of FlowMagic’s capabilities. Speaking in front of industry leaders and attendees, Mantra Labs CEO Parag Sharma took the opportunity to showcase our AI-first approach to solving insurance challenges. FlowMagic embodies that approach in tackling the problems plaguing traditional insurers, for example by reducing document delivery times to the back office by 80%.

FLOWMAGIC DASHBOARD

Customizable Workflows

The platform offers plug-and-play capability. Using quick drag-and-drop, users can create custom workflows for the most pressing operational functions, such as insurance agent onboarding or medical invoice verification. Mantra Labs has pre-built over 50 AI-powered apps for users to take advantage of. The open platform also allows insurers to create their own apps, which can be seamlessly integrated.

FLOWMAGIC’S IN-BUILT APPS

By leveraging machine learning, insurers can use FlowMagic to put intensive operational functions on autopilot. The AI tool can automate the ‘classify, extract, and validate’ cycle for insurers and route decision-ready insights straight to decision-makers.
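To make the ‘classify, extract, and validate’ cycle concrete, here is a minimal Python sketch of the three stages. It is not FlowMagic’s API or implementation: the keyword rules, regular expressions, field names and document types below are all hypothetical stand-ins for the trained ML and NLP models a real platform would use.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Result:
    doc_type: str
    fields: dict
    errors: list = field(default_factory=list)

    @property
    def decision_ready(self):
        # A document is decision-ready only if validation found no problems.
        return not self.errors


# Hypothetical keyword rules standing in for a trained classifier.
KEYWORDS = {
    "medical_invoice": ("invoice", "amount due"),
    "agent_onboarding": ("agent", "license"),
}

# Hypothetical field patterns standing in for ML/NLP extraction.
PATTERNS = {
    "medical_invoice": {
        "invoice_no": r"Invoice\s*(?:No\.?|#)\s*:?\s*(\w+)",
        "amount": r"Amount Due\s*:?\s*([\d,]+\.\d{2})",
    },
    "agent_onboarding": {
        "license_no": r"License\s*(?:No\.?|#)\s*:?\s*(\w+)",
    },
}


def classify(text):
    """Pick the document type whose keywords appear most often."""
    lowered = text.lower()
    scores = {t: sum(k in lowered for k in kws) for t, kws in KEYWORDS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "unknown"


def extract(text, doc_type):
    """Pull the target fields defined for this document type."""
    found = {}
    for name, pattern in PATTERNS.get(doc_type, {}).items():
        match = re.search(pattern, text, re.IGNORECASE)
        if match:
            found[name] = match.group(1)
    return found


def validate(doc_type, fields):
    """Report unrecognized documents and any expected field that is missing."""
    errors = [
        f"missing field: {name}"
        for name in PATTERNS.get(doc_type, {})
        if name not in fields
    ]
    if doc_type == "unknown":
        errors.append("unrecognized document type")
    return errors


def process(text):
    """Run one document through classify, extract and validate."""
    doc_type = classify(text)
    fields = extract(text, doc_type)
    return Result(doc_type, fields, validate(doc_type, fields))
```

In this sketch, a document that passes all three stages comes back with `decision_ready` set to true and can be routed onward, while anything with validation errors would be queued for manual review.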

Although paper use is declining, the insurance field is still paper-intensive. Insurers are shifting towards AI-powered engines to replace unnecessary manual effort on redundant operational tasks. These systems can bring about at least a 70% reduction in manual processing and a 30% improvement in cost efficiency throughout the value chain.

To know more about how FlowMagic is helping insurance leaders cognitively automate complex processes, reach out to us at hello@mantralabsglobal.com
