The need for automated reading of handwritten forms, and with it the number of available solutions, has been rising for as long as one can remember. Almost all businesses, to varying degrees, use paper-based forms that customers fill in by hand. Most, if not all, of these businesses convert this handwritten information into digital format. Depending on the technological sophistication or the size of the business, this digitization might be done manually by one or more data entry specialists or through an automated solution.

It’s easy to see how the manual route may not be an ideal solution for medium or large-sized businesses. Some of the apparent drawbacks of manual document processing are:

- The cost of data entry specialists quickly adds up, because digitizing more documents requires adding more resources.

- Manual data entry is a slow process.

- Manual data entry is error-prone and requires a quality inspection which is costly and not fail-proof.

Many businesses have realized this and have transitioned to some form of partially or fully automated solution. However, it’s not all rosy for these businesses either. The problems they face are primarily related to the accuracy of the solutions currently on the market.

Shortcomings of Existing Hand-written Document Processing Solutions

The industry average for ICR (Intelligent Character Recognition) accuracy at the character level is about 70%, and it drops significantly when measured at the word level, which is what matters in the end. Such automation may reduce the number of data entry personnel, but with such a low level of accuracy it increases the need for quality check resources. These are often more expensive than data entry resources, which dilutes the cost-benefit of automation. Moreover, since a quality check is slower than data entry, this kind of automation doesn’t even address the speed problem.
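To see why word-level accuracy falls so far below character-level accuracy, here is a minimal sketch. It assumes, purely for illustration, that each character is misread independently of the others, which real handwriting errors do not strictly follow. The function name `word_accuracy` is hypothetical.

```python
def word_accuracy(char_accuracy: float, word_length: int) -> float:
    """Chance that every character of a word is read correctly.

    Assumption (illustrative only): per-character errors are independent.
    """
    return char_accuracy ** word_length


# How a 70% character-level accuracy translates to words of various lengths.
for length in (3, 5, 8):
    print(length, round(word_accuracy(0.70, length), 3))
```

Under this simplified assumption, even a short word is more likely to contain an error than not, which is why word-level figures are the ones to watch.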

Some of the reasons that result in a low level of accuracy among existing document processing solutions are:

- Poor form design

- User input not in line with the format

- Noisy images

- Misaligned documents

- Low-quality scanning of documents

- Spelling mistakes by the user

- Overwriting/corrections by user

While we may not have control over some of the above factors, such as form design and user input, we can definitely improve the data extraction models to account for the others, such as image noise, misalignment and spelling mistakes.

Our ICR Solution

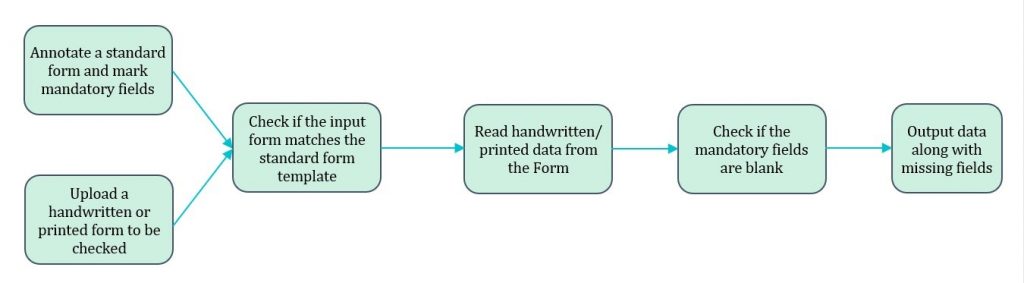

The Document Parser solution in FlowMagic provides an intuitive user interface where data can be extracted from any standard form in three easy steps:

Step 1: The user annotates the form (this is a one-time exercise for each new form) using an easy and intuitive UI. During annotation, each input field can optionally be labelled as mandatory. The user can specify the datatype for each field as alphabets, numeric or checkbox and also set the context for the field e.g. Name, PAN, City, Car Make, Date etc. Once done, the saved template can be used repeatedly for reading forms of the same type as long as there are no changes in the form design. In case of a change, the saved template can be easily modified.

Step 2: The user uploads one or more forms and chooses the corresponding template (from previous annotations). The system automatically extracts data from the forms.

Step 3: The system exports the output in CSV, XML or JSON as desired by the user. If any field was marked as mandatory during annotation, the system also outputs a list of all mandatory fields that are blank.
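The mandatory-field check and export in Step 3 can be pictured roughly as follows. This is only a sketch: the template layout, the field names and the function names are hypothetical and do not reflect FlowMagic's actual formats or API.

```python
import json

# Hypothetical template produced by the one-time annotation in Step 1.
template = [
    {"name": "Name", "datatype": "alphabets", "mandatory": True},
    {"name": "PAN", "datatype": "alphanumeric", "mandatory": True},
    {"name": "City", "datatype": "alphabets", "mandatory": False},
]

# Hypothetical extraction result for one uploaded form (Step 2).
extracted = {"Name": "ASHA RAO", "PAN": "  ", "City": ""}


def blank_mandatory_fields(template, extracted):
    """Return names of mandatory fields whose extracted value is empty."""
    return [
        field["name"]
        for field in template
        if field["mandatory"] and not extracted.get(field["name"], "").strip()
    ]


def export_json(template, extracted):
    """Bundle the extracted data and the blank mandatory fields as JSON."""
    return json.dumps(
        {
            "fields": extracted,
            "blank_mandatory_fields": blank_mandatory_fields(template, extracted),
        },
        indent=2,
    )


print(export_json(template, extracted))
```

Here `City` is blank but optional, so only `PAN` is reported as a missing mandatory field.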

Salient features of ICR Document Parser

- The standard form being annotated can have any number of pages, and the input form need not have the same number of pages. If there is a mismatch between the pages in the input form and the template, the system matches the pages and runs data extraction on the matching pages only. This also means that the pages of the input form need not be in the correct order.

- The system can read handwritten as well as printed forms.

- The system corrects for minor misalignments introduced during scanning and for documents scanned in the wrong orientation.

- The system has inbuilt dictionaries for various contexts such as Name, Cities, States, Countries, PAN, Profession, Marital Status, Relationship, Amount, Car Make, Date, Gender.

- The various data types supported by the system are alphabets, numeric, alphanumeric, checkboxes and special characters.

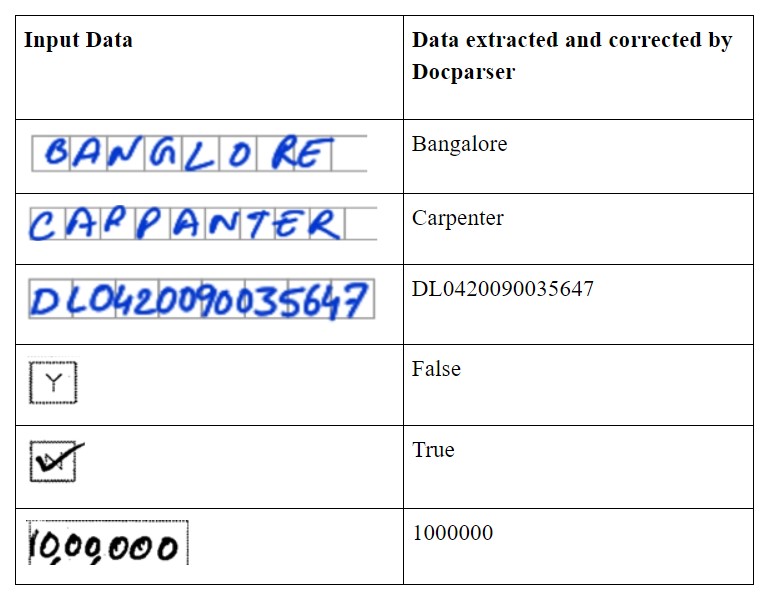

- The system corrects user errors or scanning issues by performing data type and dictionary checks (see examples below).

- The system checks for mandatory fields to make sure the form is completely filled.
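As a rough illustration of what data type and dictionary checks could look like, the sketch below uses Python's standard `difflib` module for fuzzy matching. The look-alike table, the city list, the cutoff and both function names are assumptions made for this example, not the product's actual logic.

```python
import difflib

# Assumed table of letters that are commonly misread in place of digits.
DIGIT_LOOKALIKES = str.maketrans(
    {"O": "0", "o": "0", "I": "1", "l": "1", "S": "5", "B": "8"}
)

# Tiny stand-in for an inbuilt "Cities" dictionary.
CITIES = ["Mumbai", "Delhi", "Chennai", "Bengaluru"]


def correct_numeric(raw: str) -> str:
    """Data type check: in a numeric field, map look-alike letters to digits."""
    return raw.translate(DIGIT_LOOKALIKES)


def correct_with_dictionary(raw: str, dictionary, cutoff: float = 0.8) -> str:
    """Dictionary check: snap a reading to the closest known entry, if any."""
    matches = difflib.get_close_matches(raw.title(), dictionary, n=1, cutoff=cutoff)
    return matches[0] if matches else raw


print(correct_numeric("4O1S"))                    # letters replaced by digits
print(correct_with_dictionary("Mumbal", CITIES))  # close to a known city
print(correct_with_dictionary("Xyz", CITIES))     # no close match, unchanged
```

A higher cutoff makes the dictionary check more conservative: fewer readings are changed, but fewer genuine misreads are repaired.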

Examples of Data Read/Corrections Made by an ICR

Benefits of ICR

Flexibility – you can annotate a wide variety of forms with complex inputs and data formats using the multiple data types and contexts built into the system.

Speed – Both annotation and data extraction are very user-friendly and fast. The system can extract data from a five-page form in under 30 seconds.

Scalability – The system is highly extensible. Once set up for one type of form, it can easily be scaled to multiple form types or used to process documents of the same format in bulk.

Accuracy – The character level accuracy of our model is over 90%. Word level accuracy depends on the form design and quality but in general, varies between 75% and 85%.
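For readers who want to measure these two figures on their own forms, here is one common way to do it, sketched under assumptions: character-level accuracy is taken as one minus the edit distance divided by the number of ground-truth characters, and word-level accuracy as the share of exact matches. Other definitions exist, and this is not necessarily how the figures above were computed.

```python
def edit_distance(truth: str, predicted: str) -> int:
    """Levenshtein distance: minimum insertions, deletions and substitutions."""
    previous = list(range(len(predicted) + 1))
    for i, t_char in enumerate(truth, 1):
        current = [i]
        for j, p_char in enumerate(predicted, 1):
            current.append(
                min(
                    previous[j] + 1,                      # deletion
                    current[j - 1] + 1,                   # insertion
                    previous[j - 1] + (t_char != p_char), # substitution or match
                )
            )
        previous = current
    return previous[-1]


def accuracies(pairs):
    """Return (character-level, word-level) accuracy for (truth, predicted) pairs."""
    total_chars = sum(len(truth) for truth, _ in pairs)
    errors = sum(edit_distance(truth, predicted) for truth, predicted in pairs)
    char_accuracy = 1 - errors / total_chars
    word_accuracy = sum(truth == predicted for truth, predicted in pairs) / len(pairs)
    return char_accuracy, word_accuracy


# Hypothetical sample: one field with a single misread character, one perfect.
sample = [("MUMBAI", "MUMBAl"), ("DELHI", "DELHI")]
char_acc, word_acc = accuracies(sample)
print(round(char_acc, 3), word_acc)
```

In this toy sample a single wrong character out of eleven costs half of the word-level score, which illustrates why the two numbers diverge.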

Workflow

No matter what solution you use, you can always benefit from these best practices for form design to improve the accuracy of your ICR:

- Have all instructions in bold at the top of the form.

- Instruct the user to write clearly in block letters as the form will be processed by a machine.

- Provide examples of how to enter data wherever there is a scope for confusion.

- Instead of providing a free-form space for data entry, provide a clearly marked space with a specific location for each character.

- Make the overall space large enough to hold the required data, so that users do not write outside it.

- Have enough separation between the space for two fields to avoid overlap.

To learn more about how FlowMagic can improve the accuracy and speed of your document digitization/Intelligent Character Recognition (ICR) or discuss your broader AI goals, please get in touch with us at hello@mantralabsglobal.com.
