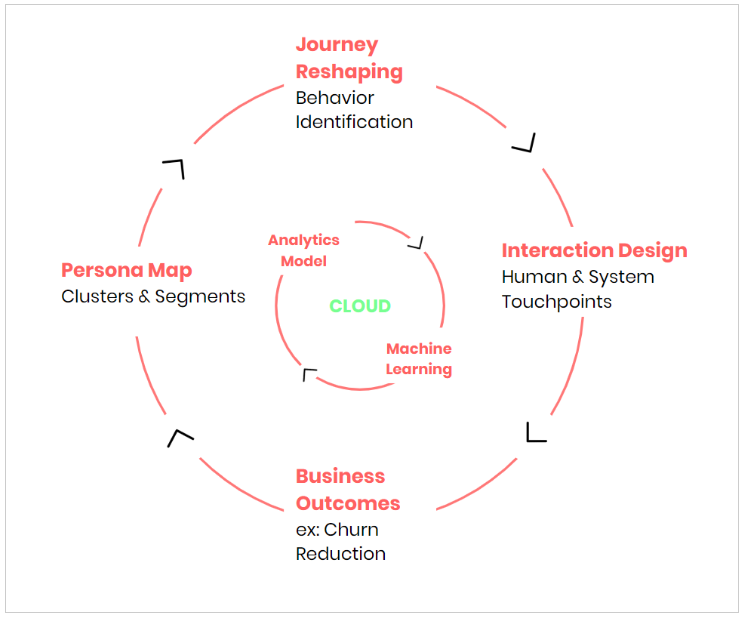

Predictive analytics is disrupting the business-consumer dynamic. To improve engagement with their customers, organizations have begun identifying segments (predictive audiences) that are likely to convert. Modelling data to learn about the potential ‘new’ customer, their preferences and spending behaviour has already produced demonstrably higher conversion rates and lower churn rates. In fact, the market for these types of services is expected to reach $12.4B by 2022.

As we transition into a semi-connected world supported by global IoT sensors and devices, the real-time analysis of past and probable future events is making business actions more prescriptive in nature. Every touch or interaction triggered by an individual customer is a data point that is captured, stored and examined for insights. Data is an asset that continues to grow exponentially, while storage gets cheaper each year. With nearly unlimited cloud computing capacity, it becomes much easier to process these extremely large amounts of data.

But are customer journeys actually getting better? Are these journeys still reactive? How much of the world has moved to a predictive-first approach? And has it really helped CXOs address their business goals? Let’s evaluate the state of real-time predictive trends that are being put to use by global enterprises.

First, let’s look at some easily identifiable use cases that have some verifiable results.

- Identity Resolution: understanding the individual persona consistently and accurately across domains, devices and channels, while maintaining stringent privacy compliance. This approach typically gives you a single view of a potential customer. (ex: LiveRamp, Full Contact)

- Customer Journey Data Integration: data integration transcends the siloed view of traditional web analytics. Multiple integrations (web, mobile app, email, social media, CRM, call centre, device, etc.) are essential to understand customer flow across channels. (ex: FirstHive)

- Customer Segmentation and User Experience Recommendations: clustering models are used to perform highly accurate segmentation, creating micro-segments and tracking each customer as they shift from one segment to another. (ex: Lattice-Engines)

- Personalization: machine learning-based attribution identifies which marketing campaigns, channels, touches and behaviours users respond to, and which of these contribute to a business outcome. (ex: Everage)

- Lead Scoring, Prioritization & Allocation: it helps identify which leads will convert, which customers will churn, and which customers will buy one or more products through a cross-sell or upsell. (ex: Mantra Labs LCA, Pardot)

- Automating Prediction & Rule Setting: automated machine learning is used for predictive modelling, which enables rapid iteration cycles. (ex: Nokia, DataRobot)
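To make the segmentation use case above a little more concrete, here is a minimal sketch of clustering customers into micro-segments and then flagging customers who shift from one segment to another. It uses a small hand-written k-means in plain Python. The customer names, the two features (visit frequency and spend, both pre-scaled to the 0 to 1 range) and the choice of two segments are illustrative assumptions, not taken from any vendor listed above.

```python
from math import dist  # Euclidean distance, available in Python 3.8+


def assign(points, centroids):
    """Return, for each point, the index of its nearest centroid."""
    return [
        min(range(len(centroids)), key=lambda c: dist(p, centroids[c]))
        for p in points
    ]


def kmeans(points, k, iterations=20):
    """Very small k-means: returns (centroids, labels)."""
    # Assumption: deterministic start, using the first k points as centroids.
    centroids = [tuple(p) for p in points[:k]]
    for _ in range(iterations):
        labels = assign(points, centroids)
        updated = []
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:
                # New centroid is the mean of the members, per dimension.
                updated.append(tuple(sum(d) / len(members) for d in zip(*members)))
            else:
                updated.append(centroids[c])  # keep an empty cluster in place
        if updated == centroids:
            break  # converged
        centroids = updated
    return centroids, assign(points, centroids)


# Hypothetical customers: (visit frequency, spend), both scaled to 0..1.
customers = {
    "ana": (0.1, 0.2),
    "ben": (0.9, 0.8),
    "cho": (0.2, 0.1),
    "dev": (0.8, 0.9),
}
names = list(customers)
centroids, labels = kmeans([customers[n] for n in names], k=2)
before = dict(zip(names, labels))

# One month later, "cho" has become far more active (hypothetical data).
next_month = dict(customers, cho=(0.7, 0.9))
after = dict(zip(names, assign([next_month[n] for n in names], centroids)))

# Customers whose segment changed: name -> (old segment, new segment).
shifts = {n: (before[n], after[n]) for n in names if before[n] != after[n]}
print(shifts)
```

In practice a team would more likely reach for an established library and many more features, but the idea is the same: fit the segments once, re-assign customers as new behaviour arrives, and act on the customers who move.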

The total number of journey interactions the world over is unquantifiable. It is predicted, though, that every individual will create nearly 2MB of data every second in 2020. With all this data to go around, why are companies so invested in it? It’s because customer experience has become the number one marketing activity of 2019, and will continue to rank highly over the next five years.

In fact, Gartner predicts that by 2019 more than 50% of organizations will redirect their investments to customer experience innovations. For SaaS enterprises, there is a lot to gain. Research indicates CX initiatives can double an organization’s revenues within 36 months, and this extra share will come from the customer’s wallet. Good CX will create real value for your customers, which means they will spend more.

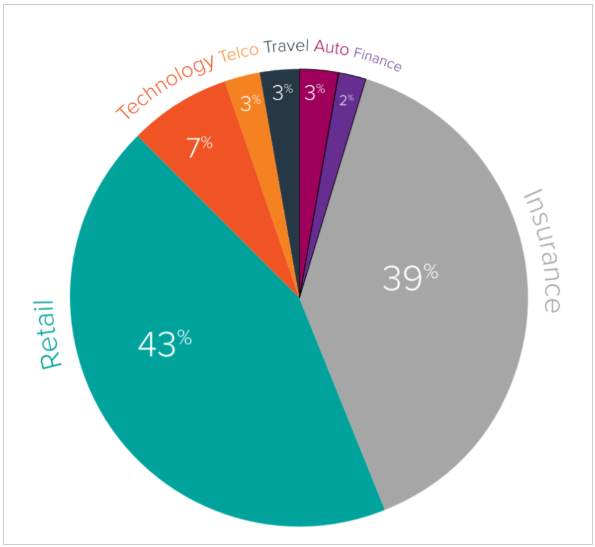

According to Accenture, 87% of organizations agree that traditional experiences no longer satisfy customers. To counter this, businesses are now investing in customer journey management. Interestingly, insurance (39%) shows the highest adoption rate outside of retail (42%). The tech industry comes in third at 7%.

Customer journeys are grouped into three types: Acquisition, Conversion and Growth. The majority of journeys are identified as growth journeys (64%), and these run for nearly 34 months on average.

Has it made a difference in Experience?

Yes, and there’s data to support it.

The predictive journey allows businesses to place real-time marketing bets on the behaviour of the customer. We don’t have to look any further than Netflix and its impressive predictive recommendation system. Almost 80% of the content watched on Netflix is attributed to recommendations. A robust predictive analytics engine working behind the scenes supports two critical aspects of the customer life cycle: needs forecasting and churn reduction. The system is estimated to save Netflix at least $1 billion each year in customer retention.

What about the Impact on Business Goals?

The short and long answer is yes.

According to a Salesforce study, the key to building highly personalised journeys begins with predictive intelligence. The report found that, on average, predictive intelligence recommendations influenced 34.7% of total purchases. The lift in conversion rate within the first 36 months is around 23%, which is significant. Imagine what 23% more conversions can do for any business. The real value of predictive intelligence is that it gets more intuitive with time. After 36 months of implementation, this technology has 40.3% more influence on revenue.

For future engagements, customers want businesses to proactively reach out and offer tailored products and services that are highly relevant to their needs. Businesses, for their part, prefer to study consumer data within the strict limits set by data privacy laws, because doing so reduces long-term risk to their business models. The results are clear: a predictive journey is the only way forward.

Mantra Labs is an Insurtech100 company creating AI-first products and solutions for the evolving digital enterprise. To learn more about how we are using predictive journeys to create the Internet of Intelligent Experiences™, reach out to us on hello@mantralabsglobal.com.
