Conversational AI is the technology that makes human-computer conversations more emotionally intelligent and less scripted. It allows AI-led chatbots to interact with people in a human-like manner, keeping the interaction as natural as possible while remaining emotionally aware.

Conversational AI is trained to comprehend and engage in contextual dialogue using Natural Language Processing (NLP) and additional AI algorithms.

Conversational AI is one of several emerging technologies, alongside augmented intelligence, edge AI, data labeling, and explainable AI.

How does Conversational AI help deliver better CX?

Conversational AI uses a combination of natural language processing (NLP), machine learning (ML), speech recognition, and natural language understanding (NLU), among other language technologies, to process and contextualize voice or text messages and respond with the most suitable answer.

Through NLP, the system can identify the intent behind a customer's message and the entities mentioned in it by matching the statistical patterns it has been trained on. This enables it to learn your business needs and get smarter over time, supporting a more evolved customer experience.
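To make "intents and entities" concrete, here is a minimal sketch in Python. It is not how a production conversational AI system works: real systems use trained statistical models, while this sketch substitutes simple keyword matching and a regular expression so the idea fits in a few lines. The intent names, keywords, and the `POL-` policy number format are all hypothetical and invented for illustration.

```python
import re

# Hypothetical intents and trigger keywords, invented for illustration only.
INTENT_KEYWORDS = {
    "renew_policy": {"renew", "renewal"},
    "file_claim": {"claim", "accident"},
    "get_quote": {"quote", "price", "premium"},
}

# Hypothetical entity format: a policy number written as "POL-" plus digits.
POLICY_NUMBER = re.compile(r"\bPOL-\d+\b", re.IGNORECASE)


def detect_intent(message):
    """Return the intent whose keywords overlap most with the message."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    best_intent, best_score = "unknown", 0
    for intent, keywords in INTENT_KEYWORDS.items():
        score = len(words & keywords)
        if score > best_score:
            best_intent, best_score = intent, score
    return best_intent


def extract_entities(message):
    """Pull out any policy numbers mentioned in the message."""
    return {"policy_numbers": [m.upper() for m in POLICY_NUMBER.findall(message)]}


def understand(message):
    """Combine intent detection and entity extraction into one result."""
    return {"intent": detect_intent(message), "entities": extract_entities(message)}
```

Calling `understand("I want to renew my policy POL-12345")` returns the intent `renew_policy` together with the policy number `POL-12345` as an entity. A message that matches no keyword falls back to the intent `unknown`, which a chatbot could use as a signal to hand the conversation to a human agent.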

Why is it important to invest in Conversational AI?

Gartner had predicted that, by 2020, customers would manage 85% of their interactions with businesses without interacting with a human. Over the coming decade, basic automation and apps are predicted to give way to advanced AI technologies that aim to improve the overall customer experience by proactively gauging the customer’s needs and intent and engaging on an emotional level.

A growing number of AR and VR innovations across industries are expected to become the norm by 2025 as part of the standard customer experience offering.

“The greatest advantage of having a conversational AI solution is the instant response rate. Answering inquiries within an hour means 7X greater probability of converting over a lead. Clients are bound to discuss a negative encounter than a positive one,” reports AnalyticsInsight.net.

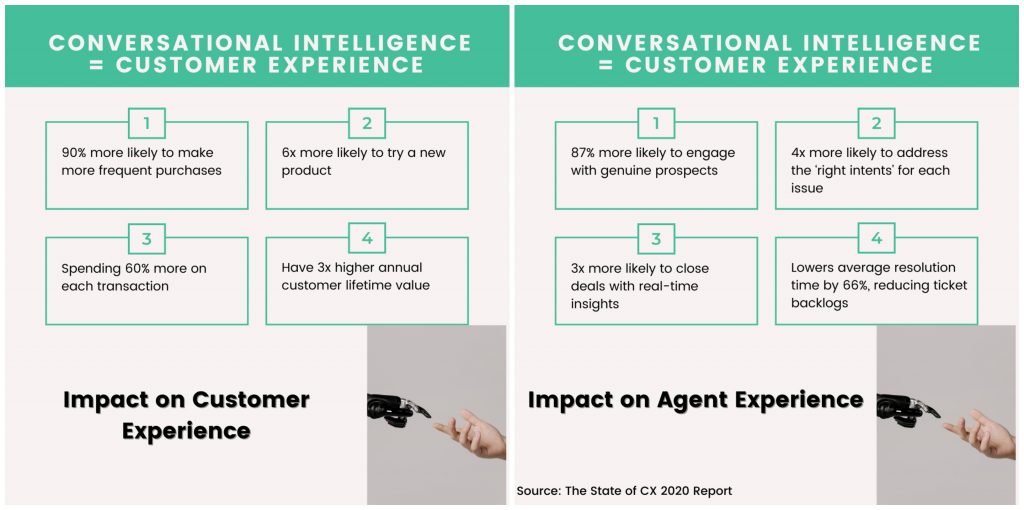

Image Courtesy: www.kore.ai

Conversational AI in Insurance

Conversational AI is an ideal addition for service, healthcare, and insurance organizations, to name a few. It helps support agents streamline their work, take after-call notes in the CRM system, and fill in missing details, all of which contribute to a seamless customer service system. The technology also helps predict or react to changing call patterns and provide any real-time guidance the agent might require.

Additionally, customers can first engage in self-service aided by conversational intelligence to save time, improve efficiency, and get the desired results sooner. While rebranding the website and app of Care Health Insurance (formerly Religare), Mantra Labs deployed Hitee, their AR-based virtual support, which provided first-level solutions for customers. This led to a 10X increase in new business conversions and a 20% overall drop in customer queries over voice support.

The 2021 Customer Support Trends Report says that poor customer experience costs companies at least $62 billion annually. In the same report, 56% of support leaders said that their current chatbot implementations lack intelligent tools, while customers increasingly demand convenience from the businesses they interact with.

Intelligent chatbots make apps simpler and more human to use. Their USP is creating device-agnostic experiences across channels, which makes them a key factor in driving intelligent customer experiences.
