The global chatbot market is expected to reach USD 1.25B by 2025 and to generate roughly USD 8B in savings globally by 2022. With chatbots already disrupting a wide range of industries, the technology is gaining traction across many business use cases, especially in the insurance sector.

Chatbots are becoming more advanced

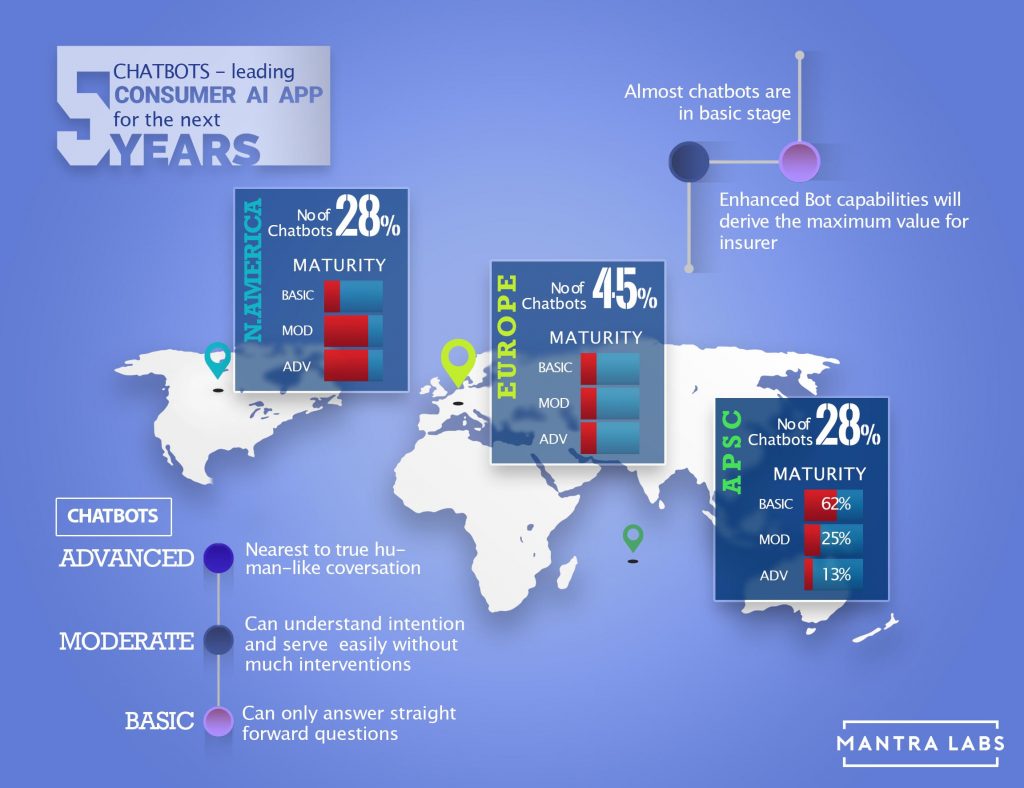

Chatbots are a natural extension of the push for self-service capabilities. Yet despite their growing popularity, almost 60% of insurers surveyed worldwide have yet to implement a chatbot, according to a recent white paper published by Cognizant Research. Cognizant's research (validated by our own internal findings) also shows that a bot's capability depends on its maturity, which falls into one of three levels: basic, moderate or advanced.

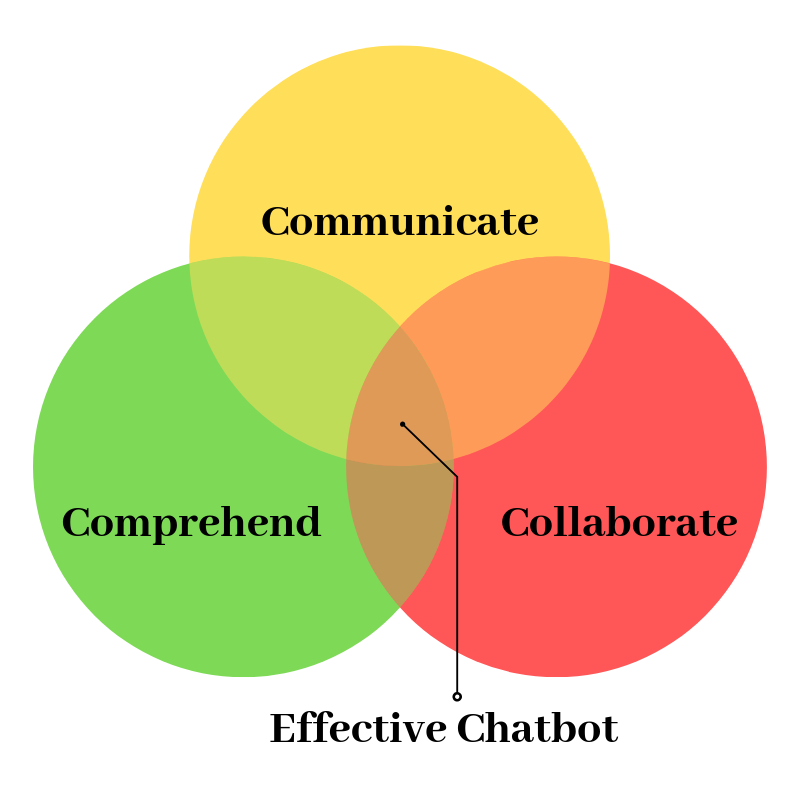

On this spectrum, a ‘basic’ bot is mostly rules-based: it can follow only simple instructions and often defers to a human. Bots that come closest to true human-like conversation are classified as ‘advanced’. A bot's maturity level is determined by its performance and its ability to Communicate, Comprehend and Collaborate with the user, providing utility across the value chain. These three Cs are key factors in distinguishing an effective bot from an unsatisfactory one.

Of the insurers that have deployed chatbots in their operations, a majority (68%) use only a basic form of the technology. While these insurers have already cut costs and reduced customer servicing time, there is still significant opportunity to deploy more capable, reliable and intelligent bots across the insurance value chain.

Europe has the highest share of basic-maturity chatbots among insurers, at 60%. Asia, along with the Middle East and Africa (MEA), shows the most potential for chatbot adoption over the next five years in terms of both market size and CAGR. North America remains the largest adopter of ‘advanced’ bots in insurance.

Limitations to overcome

To realize the business value of chatbots, insurers need to address the following limitations:

- Need for a human-centric interface: Interactions with chatbots are still mostly robotic, leaving the end user with a frustrating, impersonal experience.

- Inability to contextualize conversations: Bots are programmed to follow a fixed sequence or algorithm, so they cannot grasp the nuances of human language, which results in an unfulfilling and inauthentic experience.

- Scalability issues: Developers need to anticipate the potentially exponential rise in traffic a bot may have to handle, and build the bot to cope with it.

- Privacy and data protection: Data is both an asset and a liability. Since customers often share personal data while conversing with a chatbot, insurers need to prioritise customer privacy and comply with the data protection regulations of each region they operate in.

Opportunity Landscape for AI-enabled Chatbots

Chatbots can be used for both simple and complex insurance processes to create tangible business value. Notable successes have been seen in the following areas:

- High-volume, pre-purchase interactions: Chatbots are mostly implemented where customer traffic is highest, i.e. the pre-purchase phase of the insurance life cycle, which reduces the need for human involvement and increases cost savings.

- Property and casualty interactions: Chatbots have found wide acceptance in property and casualty interactions, primarily in pre-purchase activities and financial-need analysis. “Haven Life Insurance, a start-up backed by MassMutual, is using chatbot technology to calculate customers’ coverage needs and offer estimated monthly rates for term life plans accordingly.”

- Troubleshooting and customer education: Chatbot use in this area is still at an early stage, and companies are steadily enhancing their bots to move from an informational to a transactional approach. “PNB MetLife’s chatbot started off as a basic customer engagement bot, hosting a rule-based, unidirectional health quiz; however, the company is incrementally converting it into an end-to-end cancer care protection tool.”

AI in insurance is projected to be valued at USD 36B by 2026, with chatbots accounting for 40% of overall deployment, predominantly in customer service roles.


Insurtechs will lead the pack

One reason insurtechs are implementing chatbots at scale is that they predominantly target tech-savvy millennials and Gen Z, who are more open to change and disruptive innovation. Positive customer experiences are linked to twice as many referrals, expanding business scope beyond the limits of traditional customer interaction.

Reimagining Insurance with Chatbots

The insurance industry has reached a crossroads that requires insurers to become digitally agile. Over the next few years, chatbots are set to bring massive change to the industry, and insurtechs are leading the way in bringing AI-powered chatbots to insured customers.

- Lemonade: The New York-based insurtech relies on AI-backed chatbots in its app that craft personalized insurance policies and quotes for customers, respond swiftly to a variety of customer queries, and process claims.

- Next Insurance: The insurance provider launched a chatbot via Facebook Messenger through which small businesses can obtain quotes and buy insurance.

- Trōv: The company has integrated a chatbot into its mobile app that handles customer queries and claims by seamlessly gathering incident-related information from the customer.

- LeO: The insurer recently launched a chatbot that schedules calls and meetings, collects leads and answers customer questions automatically, allowing agents to focus on other tasks.

- Religare: One of the top health insurers in India and part of a major financial services conglomerate, the company has integrated an AI-powered insurance chatbot that learns from actual human interactions, rather than relying on a question-and-answer format, to build a more intuitive chat-based sales funnel.

There is a direct link between the positive customer experience chatbots provide and revenue growth. Almost one-third of insurance business is expected to be generated through digital channels within the next five years, and companies that leverage AI-driven customer data in their chatbots are well positioned to flourish.
