Innovation and disruption are causing a paradigm shift in the Indian insurance industry today.

The industry is expected to touch USD 280B by 2020. With the advent of InsurTech, blockchain, big data, AI, IoT, and AR amid changing consumer preferences, insurers have taken a holistic approach to automation that challenges traditional concepts and makes insurance a battleground between the old and the new.

Insurance penetration in India is only 3.7% of GDP, compared with a world average of 7%. However, changes in demographics, technology, and business models have opened up a plethora of opportunities for the Indian insurance industry, which is growing at 11% annually. The industry is beginning to break out of its emerging state into broader impact and use, enabling insurers to expand into more ecosystems than ever before.

The recently concluded “4th Annual Insurance India Summit & Awards 2019”, with the motto “Integrating Technology & Big Data to Enhance Distribution Channel, Marketing Strategy & Customer Experience”, aimed to host robust, focused discussions on the inherent challenges of insurance. IISA provides a platform for one of India’s largest gatherings of insurance leaders and innovators.

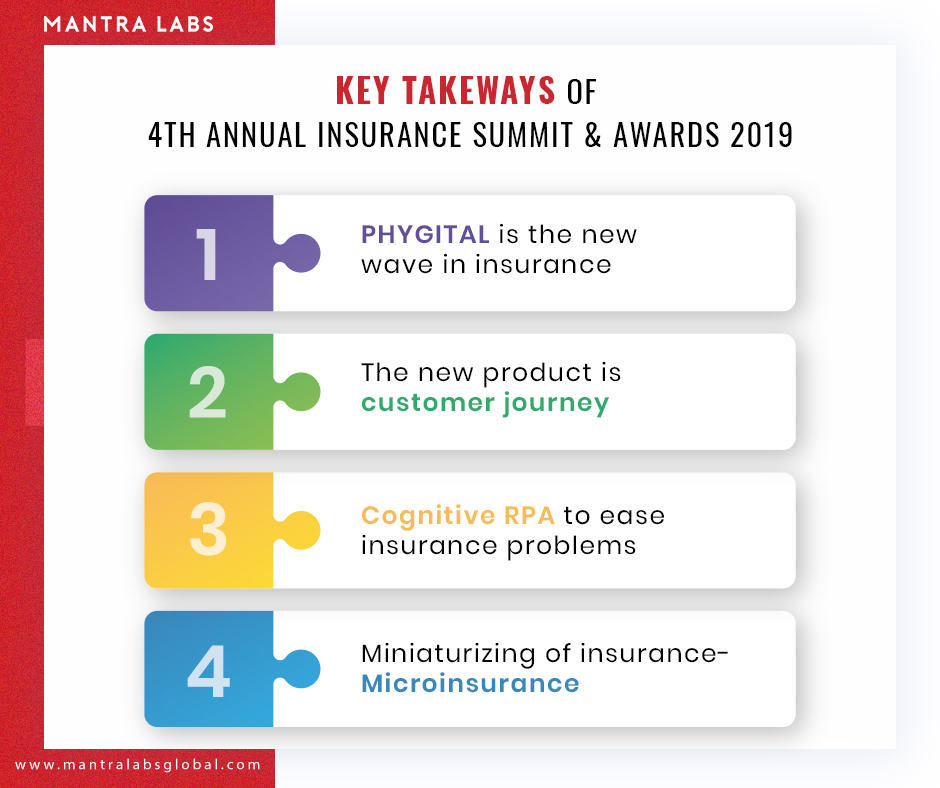

Let’s have a look at the key takeaways of the 4th Insurance India Summit and Awards 2019.

PHYGITAL is the New Wave in Insurance

There is still a trust deficit between customers and insurance companies, primarily because highly suspect products promising unrealistic returns were sold over the past decade. The same customer also has very different expectations online and offline.

In such a moment of crisis, the focus on digital cannot be limited to customer acquisition; customer engagement is the key.

Phygital, i.e. physical + digital, is a concept that brands and businesses are using as a sales strategy to amplify yield. As a paradigm, phygital challenges the cascaded approach of traditional insurance and bridges the gap between the two worlds effortlessly.

Data visualization can help insurers increase customer interactivity, analyze product performance, understand data consumption objectives, and thereby improve customer experience. The objective is to provide the ultimate 360-degree experience, with a focus on relationships, lifecycle, and even life stages.

The New Product is About the Customer Journey

Customer expectations have changed significantly over a short period of time. The forecasted move to real-time interaction is indeed here.

Source: SMA white paper

Customer journeys in insurance are often complex. They involve multifaceted relationships, multiple locations, and various insurance needs. Partly because of these complexities, the 70% of Indians who work in rural areas, and who generate 40% of India’s income, have much lower access to insurance products and services.

Insurance companies are looking to create efficiency across the value chain. They are therefore also creating new ecosystems or leveraging existing ones, such as e-commerce, to widen their footprint. Instead of focusing on removing agents and selling directly, insurance companies are now focused on empowering agents.

According to recent SMA research, 85% of insurers report that customer experience and engagement is a top strategic initiative, ranking it #1, a significant shift from #4 and #5 in past years. This is good news for the industry, as it points to a determination and focus on placing the customer first.

Cognitive RPA to Ease Insurance Problems

Data is a vital ingredient for going cognitive. A cognitive insurance business equips underwriters with a repertoire of AI-enabled tools, empowering them to make better and more informed decisions about their customers.

RPA tools currently occupy the Peak of Inflated Expectations in the Gartner Hype Cycle for Artificial Intelligence, 2018.

Cognitive RPA is widely adopted in various industries, insurance included. “End-user organizations adopt RPA technology as a quick and easy fix to automate manual tasks,” said Cathy Tornbohm, vice president at Gartner. In the insurance industry, automating day-to-day tasks could reduce cost and time spent while increasing accuracy, quality, and competency.

Miniaturizing of Insurance — Microinsurance

Insurance coverage is the greatest aid against the consequences of risk exposure and also provides support for the insured’s credit. However, 65% of Indians below the age of 35 don’t want to buy health insurance.

To provide “insurance for all”, the Insurance Regulatory and Development Authority of India (IRDAI) has a specialized category of insurance policies called microinsurance. It promotes bite-sized insurance coverage among Gen Y and the economically vulnerable sections of society.

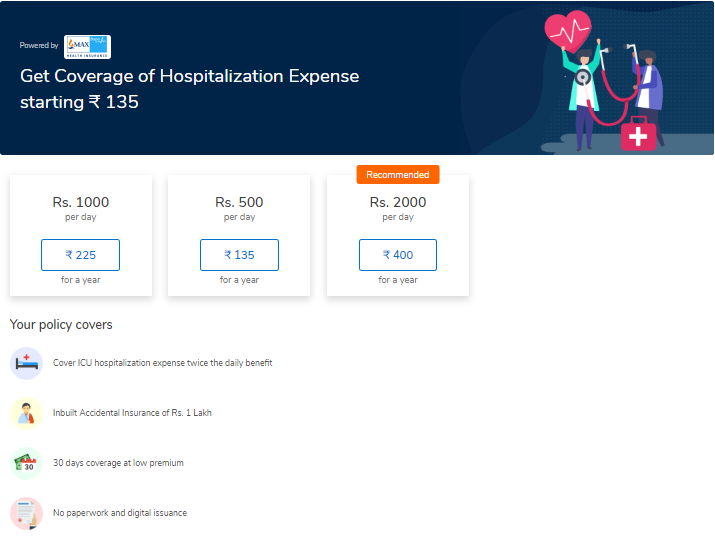

Microinsurance products are far more affordable than bulky conventional insurance schemes. Recently MaxBupa, a standalone health insurer, partnered with Mobikwik, a fintech platform, to promote affordable and convenient microinsurance products. Priced at an annual premium of ₹135, their product, HospiCash, will offer a hospital allowance of ₹500 per day for up to 30 days in a year.
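To put those figures in perspective, here is a minimal Python sketch of the arithmetic, using only the three numbers quoted above. The helper name `hospital_allowance` is hypothetical, and the sketch assumes a flat per-day allowance with a simple annual cap; real policy terms such as waiting periods or exclusions are not modeled.

```python
# Figures quoted in the article for HospiCash.
ANNUAL_PREMIUM_INR = 135
DAILY_ALLOWANCE_INR = 500
MAX_DAYS_PER_YEAR = 30


def hospital_allowance(days_hospitalized: int) -> int:
    """Allowance payable in a year, capped at the covered days.

    Hypothetical helper for illustration only; assumes a flat daily
    allowance and ignores any other policy conditions.
    """
    if days_hospitalized < 0:
        raise ValueError("days_hospitalized must be non-negative")
    covered_days = min(days_hospitalized, MAX_DAYS_PER_YEAR)
    return covered_days * DAILY_ALLOWANCE_INR


max_payout = hospital_allowance(MAX_DAYS_PER_YEAR)
print(max_payout)                                  # 15000
print(round(max_payout / ANNUAL_PREMIUM_INR, 1))   # 111.1
```

Under these assumptions, the maximum annual allowance is ₹15,000, roughly 111 times the annual premium.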

The non-partisan agenda of the Summit was to explore the industry’s challenges and their deterrents in areas such as technology integration, customer engagement, and customer experience. The discussions were designed to draw out clear, shared outcomes for the industry in order to realize growth, customer satisfaction, and profitability, and to deliver definitive business value.

Mantra Labs was proud to be the business development partner at the successful Summit. We were honored to take part in the insightful conversations and to receive appreciation from the insurance industry experts present for ‘FlowMagic’, our Visual AI Platform for Insurers.

We hope to see you again, in the next edition!

To know us in person, drop us a Hi at hello@mantralabsglobal.com
